CRO testing means running structured tests on your website to get more visitors to do the thing you want (buy, sign up, click). Most guides treat it as a fancy word for A/B testing. It’s not. A/B testing is one tool in a kit that includes at least seven methods. Pick the wrong one and you waste your time.

And only 1 in 7 A/B tests produces a clear winner. That’s about a 14% success rate. So your testing strategy matters way more than any single test. Getting the CRO fundamentals right is half the battle. Run the wrong type of test and you’ll wait weeks for a result that never comes.

This guide covers every CRO testing method and when each one works best. You’ll also get a testing plan that doesn’t depend on getting lucky. If you’re brand new, our CRO implementation guide covers the full process from research to first test. Running your first test or trying to figure out why your last ten went nowhere? Start here. (The CRO audit checklist helps you find what to test, and the A/B testing ideas list gives you specific experiments by page type.)

What CRO testing actually is (and why most articles get it wrong)

Here’s what trips people up. Search “CRO testing” and almost every result treats it like a synonym for A/B testing. Show half your visitors one version, half the other, pick the winner. Done.

That’s like saying “cooking” means “grilling.” Grilling is cooking. But so is baking, frying, sautéing, and slow-cooking. Each works better for different situations. Same thing with conversion rate optimization testing.

The full toolkit spans every digital CRO channel (websites, apps, email, landing pages) and includes A/B tests, tests with multiple versions, and tests that change several page elements at once. For mobile apps specifically, testing app conversion funnels involves both store listing experiments and in-app paywall tests. There are also tests that send more traffic to the winner automatically. And tests where you show your page to five people and just ask what they think. No website traffic needed for that last one.

Why does this matter? Say you’re a small business with 5,000 monthly visitors. Someone tells you to run a multivariate test (testing several elements at the same time). You’ll be waiting until next year for results. The right test type could give you an answer in two weeks.

Companies that run CRO tests every month see 1.8x more annual revenue than those that test occasionally. The payoff is real. But only if you’re running tests that actually fit your traffic, your timeline, and what you’re trying to learn.

Our take: The CRO testing world has a jargon problem. Every tool vendor invents new names for the same things. We’ll keep it plain. If a concept needs a technical name, we’ll explain it in normal words first.

7 types of CRO tests (and when each one works best)

Every CRO test on this list answers a different kind of question. Some need lots of traffic. Some need zero traffic. Some give you a rock-solid answer. Others give you a fast, good-enough answer. Here’s how they break down.

A/B testing (split testing)

The bread and butter. You show half your visitors the original page and the other half a changed version. Then you measure which one gets more conversions. That’s it.

Split testing works best when you’re changing one thing at a time. A headline. A button. An image. One change, two versions, clear answer.
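Curious how a tool decides who sees which version? One common approach is to hash a visitor ID into a bucket, so the same person always lands on the same version. Here's a minimal Python sketch of that idea. The `visitor_id` values and the test name are made up for illustration, and this isn't how any particular tool does it.

```python
import hashlib

def assign_variant(visitor_id: str, test_name: str = "headline-test") -> str:
    """Deterministically assign a visitor to 'A' or 'B' (roughly 50/50)."""
    # Hash the test name plus visitor ID so each test gets its own split.
    digest = hashlib.sha256(f"{test_name}:{visitor_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # a number from 0 to 99
    return "A" if bucket < 50 else "B"

# Hypothetical visitor IDs, for illustration only.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1
print(counts)
```

Because the assignment depends only on the ID, a returning visitor never flips between versions mid-test.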

Real split testing examples: Highrise (a CRM app) tested a new headline on their signup page and saw 47% more paid signups. Clear Within (a skincare brand) moved their “add to cart” button above the fold and got 80% more add-to-carts. Simple changes. Big results. For a deeper look at building a high converting landing page, we break down the elements that matter most. For more proven CRO results, we compiled 12 case studies with trust ratings.

A/B testing is where most people should start. You need moderate traffic (a few thousand monthly visitors to that page) and patience. Plan for at least two weeks per test. If you want to see how A/B testing affects your conversion rate, this is the starting line. Not sure whether you need A/B testing or feature flags? Our comparison of feature flags vs A/B testing explains when each approach fits.

A/B/n testing

Same idea as A/B testing, but with more than two versions. Instead of Version A vs. Version B, you test A vs. B vs. C (and sometimes D, E, F…).

Useful when you have multiple ideas and enough traffic to test them all at once. The catch: every version you add needs more visitors. Three versions take about 50% longer to reach a confident result than two. Four versions take even longer.
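The "50% longer" figure is simple arithmetic if you assume every version needs the same number of visitors. A quick sketch with made-up numbers (1,000 visitors per version, 100 visitors a day). It ignores the extra statistical correction some tools apply when comparing many versions, so treat it as a lower bound.

```python
import math

def days_to_finish(versions: int, visitors_per_version: int, daily_visitors: int) -> int:
    """Days needed when traffic is split evenly across all versions."""
    total_needed = versions * visitors_per_version
    return math.ceil(total_needed / daily_visitors)

# Hypothetical inputs: 1,000 visitors per version, 100 visitors per day.
two = days_to_finish(2, 1_000, 100)    # 20 days
three = days_to_finish(3, 1_000, 100)  # 30 days
print(two, three, three / two)         # 20 30 1.5
```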

Keep it to three or four versions max unless you’re getting serious traffic. Otherwise you’ll be waiting months.

Multivariate testing (MVT)

Instead of testing one change, you test several changes at the same time. Different headlines combined with different images combined with different buttons. Multivariate testing figures out which combination works best.

The upside: you learn more from one test. Studies show multivariate tests increase what you learn long-term by 28% because you understand how elements interact. Maybe a bold headline works great with a simple image but terrible with a busy one. MVT tells you that.

The downside: you need a LOT of traffic. If you’re testing 3 headlines x 3 images x 2 buttons, that’s 18 combinations. Each combination needs enough visitors to give you a trustworthy answer. For most small businesses, that’s not practical.
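You can count the combinations yourself before committing to a multivariate test. This sketch uses placeholder names for the variations, and the 1,000 visitors per combination is an assumed figure, not a rule.

```python
from itertools import product

# Placeholder element variations, for illustration only.
headlines = ["headline-1", "headline-2", "headline-3"]
images = ["image-1", "image-2", "image-3"]
buttons = ["button-1", "button-2"]

combinations = list(product(headlines, images, buttons))
print(len(combinations))  # 18

# Assumed figure: 1,000 visitors per combination.
visitors_per_combination = 1_000
print(len(combinations) * visitors_per_combination)  # 18000
```

Add one more button variation and you jump from 18 to 27 combinations. That's why the traffic requirement grows so fast.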

Think of it like this: A/B testing is asking “which headline works better?” Multivariate testing is asking “which combination of headline, image, AND button works best?” Bigger question, bigger traffic requirement.

Split URL testing

Instead of changing elements on a page, you send visitors to completely different pages. Version A goes to yoursite.com/pricing and Version B goes to yoursite.com/pricing-new. Two totally different designs.

Best for radical redesigns. Hubstaff used a split URL test to compare a completely new page layout. Result: 49% more visitor-to-trial conversions. You can’t test that kind of overhaul with a regular A/B test because you’re changing everything, not just one element.

The trade-off is that when Version B wins, you don’t know exactly WHY. Was it the new headline? The layout? The colors? All of it together? You know WHAT works but not which piece did the heavy lifting.

Multi-armed bandit testing

A regular A/B test splits traffic 50/50 for the entire test. A bandit test (the name comes from slot machines, “one-armed bandits”) is smarter. It starts at 50/50 but automatically shifts more traffic toward whichever version is winning. This is one example of how AI-powered CRO testing automates decisions that used to be manual.

Instead of “wasting” half your traffic on the loser for three weeks, a bandit test reduces that waste day by day. Flash sales, seasonal promotions, homepage headlines that rotate often. That’s where bandits shine. Learn more about this approach in our multi-armed bandit testing guide.

The limitation: bandit tests aren’t great at giving you a definitive, statistically bulletproof winner. They’re built to reduce short-term losses, not to produce publishable research. If you need a confident “Version B is 23% better, we’re sure,” stick with A/B testing. If you need “send more traffic to whatever’s working right now,” use a bandit.
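To make the traffic-shifting idea concrete, here's a small simulation using Thompson sampling, one common way to run a bandit. That choice is our assumption for the example. Tools differ in what they use under the hood, and the conversion rates below are invented.

```python
import random

def thompson_bandit(true_rates, visitors, seed=42):
    """Simulate a Thompson-sampling bandit; return visitors sent to each version."""
    rng = random.Random(seed)
    n = len(true_rates)
    wins = [0] * n    # conversions seen per version
    losses = [0] * n  # non-conversions seen per version
    shown = [0] * n   # visitors sent to each version
    for _ in range(visitors):
        # Draw a plausible conversion rate for each version from its Beta posterior.
        # With no data yet, every version is equally likely to be picked.
        draws = [rng.betavariate(wins[i] + 1, losses[i] + 1) for i in range(n)]
        choice = draws.index(max(draws))
        shown[choice] += 1
        # Simulate whether this visitor converts (rates are hypothetical).
        if rng.random() < true_rates[choice]:
            wins[choice] += 1
        else:
            losses[choice] += 1
    return shown

# Hypothetical: version A converts at 5%, version B at 10%.
shown = thompson_bandit(true_rates=[0.05, 0.10], visitors=5_000)
print(shown)
```

Run it and you should see most of the 5,000 visitors end up on the better version. The weaker version gets so little traffic that its true rate stays fuzzy, which is exactly the trade-off described above.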

Preference and click tests (qualitative CRO tests)

You don’t always need website traffic to run a CRO test. Seriously.

Five-second tests show someone your page for five seconds, then ask what they remember. First-click tests see if people can find what they need. Preference tests show two designs side by side and ask which one they’d trust more (and why).

Nielsen Norman Group research found that just 5 people can identify about 85% of usability issues. Five. You can recruit testers from your email list, your social media followers, or even platforms like UserTesting or Lyssna. No website traffic required.
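Where does the 85% come from? Nielsen's model says the share of problems found is 1 - (1 - L)^n, where n is the number of testers and L is the chance that one tester hits any given problem. The 0.31 below is the average Nielsen reported across his projects. Your own rate may be higher or lower, so treat the output as a rough guide.

```python
def share_of_problems_found(testers: int, find_rate: float = 0.31) -> float:
    """Share of usability problems found, per the 1 - (1 - L)^n model."""
    return 1 - (1 - find_rate) ** testers

for n in (1, 3, 5, 10):
    print(n, round(share_of_problems_found(n), 2))
```

The curve flattens quickly after five testers, which is why a sixth or seventh person adds so little.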

For small businesses with limited traffic, these qualitative tests might be MORE valuable than A/B tests. They answer “is this confusing?” and “do people understand my value proposition?” before you spend weeks on a numbers-based test. These are the same methods that feed UX-informed testing hypotheses.

User session analysis

Heatmaps (visual maps of where people click), session recordings (watching real visitor behavior), and scroll maps (how far people scroll before leaving). Not a test in the traditional sense, but a critical input for CRO testing. We compared the best session replay and click tracking tools if you need help picking one.

Think of it as reconnaissance. Before you decide what to test, you need to know where the problem is. If nobody scrolls past your hero section, testing your footer is pointless. Session analysis tells you WHERE to focus.

The decision matrix: which test to pick

This is the part competitors skip. They list test types but never help you choose.

| Test type | Best for | Traffic needed | Time to results | Confidence level |
|---|---|---|---|---|
| A/B testing | One element, clear question | Moderate (2K+ monthly) | 2-4 weeks | High |
| A/B/n testing | Multiple ideas for one element | High (5K+ monthly) | 3-6 weeks | High |
| Multivariate (MVT) | Element interactions | Very high (20K+ monthly) | 4-8 weeks | High |
| Split URL | Full page redesigns | Moderate (2K+ monthly) | 2-4 weeks | Medium (hard to isolate cause) |
| Bandit testing | Time-sensitive campaigns | Low to moderate | Ongoing | Lower (trades rigor for speed) |
| Preference/click tests | Design validation, UX questions | Zero website traffic | 1-3 days | Qualitative (directional) |
| Session analysis | Finding problems to test | Any | Ongoing | Diagnostic (not a test) |

How to pick the right CRO test for your situation

Forget the theory. Here’s a practical decision guide.

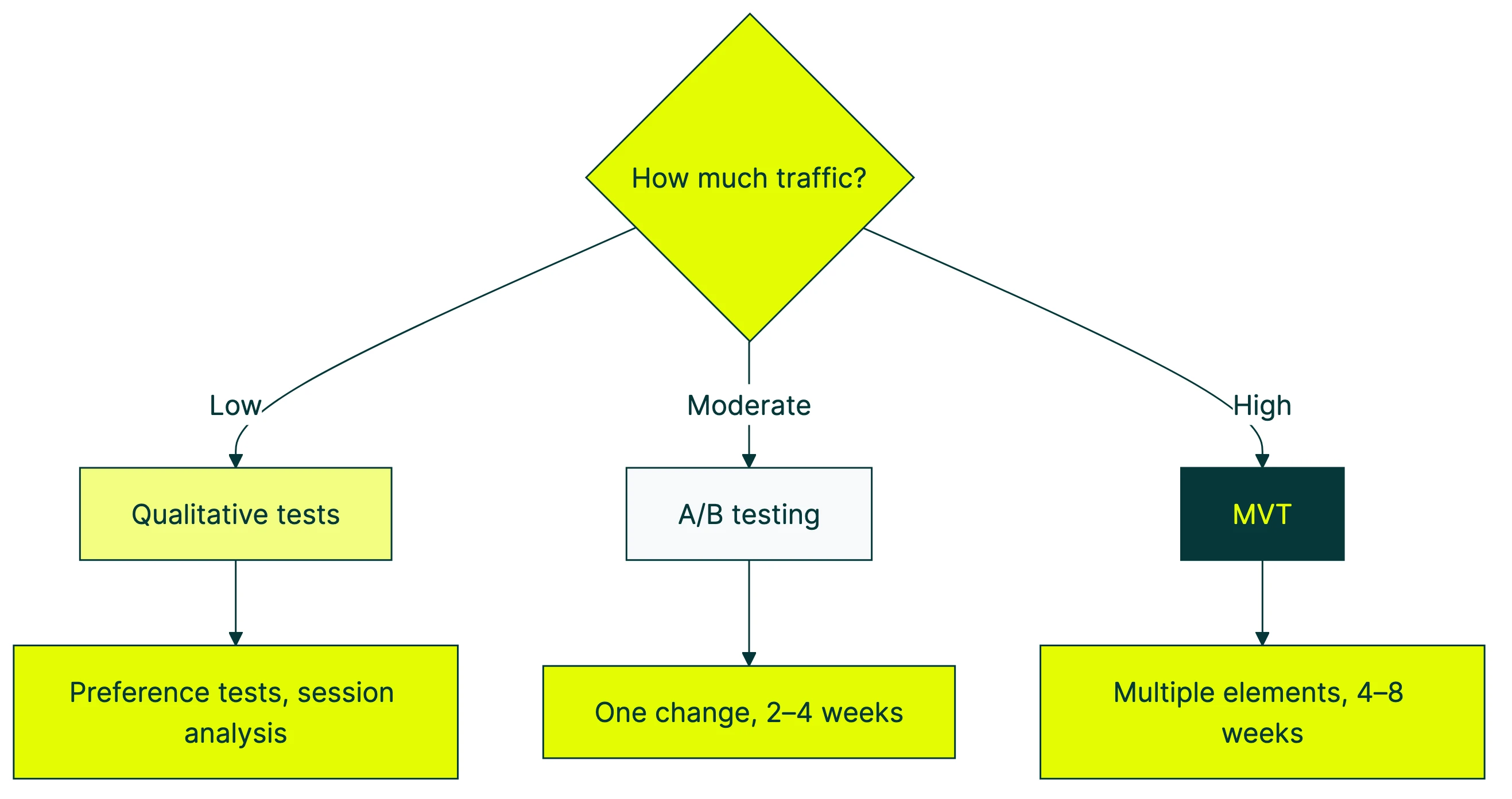

How much traffic does the page get?

Low traffic (under 2,000 monthly visitors to that specific page): stick with A/B testing on big, bold changes. Or use qualitative methods like preference tests and five-second tests that don’t need traffic at all. Don’t waste time on multivariate testing.

Moderate traffic (2,000 to 20,000): A/B testing is your sweet spot. You can also try A/B/n with three versions. Bandit testing works well for short campaigns.

High traffic (20,000+): Everything’s on the table. Multivariate testing finally becomes practical. You can run more nuanced tests with smaller expected differences.
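If it helps, the three traffic tiers above boil down to a tiny lookup. This sketch just encodes the thresholds from this section. Treating exactly 20,000 visitors as "high" is our own choice for the boundary, and the function name is made up.

```python
def suggest_tests(monthly_page_visitors: int) -> list[str]:
    """Map monthly visitors to a page onto the test types that fit that traffic."""
    if monthly_page_visitors < 2_000:
        # Low traffic: bold A/B tests or methods that need no traffic.
        return ["A/B test (bold changes)", "preference test", "five-second test"]
    if monthly_page_visitors < 20_000:
        # Moderate traffic: the A/B sweet spot.
        return ["A/B test", "A/B/n test (three versions)", "bandit test (short campaigns)"]
    # High traffic: everything is on the table.
    return ["A/B test", "A/B/n test", "multivariate test", "split URL test", "bandit test"]

print(suggest_tests(5_000))
```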

What are you testing?

One element (headline, button, image) → A/B test. Multiple elements at once → multivariate. An entirely new page design → split URL test. Whether your design makes sense → preference or five-second test.

How much time do you have?

Short deadline (a week or less): bandit testing or qualitative tests. Normal timeline (2-4 weeks): A/B testing. No rush: multivariate testing for deeper learning.

How big is the expected change?

Small tweak (button color, font size): you’ll need high traffic and patience. Bold change (new headline, different layout, repositioned form): A/B testing works even with modest traffic. Bigger changes create bigger differences, and those are easier to spot.

Our take: If you’re reading this and you’ve never run a test before, start with an A/B test on your highest-traffic page. Change the headline. That’s it. Don’t overthink test types until you’ve seen how the process works. The simple stuff works.

How to build a CRO testing plan

Random testing is expensive testing. A CRO testing plan turns gut feelings into structured experiments (sorry, tests) that build on each other. Understanding where testing fits in the CRO process helps you see how each test connects to the bigger research-test-learn loop. Here’s how to make one.

Start with research, not ideas

Everyone has opinions about what to test. “The button should be green.” “The headline is too long.” “We should add testimonials.” Opinions are cheap. Data is useful.

Before you test anything, find out where visitors are actually struggling. Google Analytics shows you which pages have the highest drop-off rates. Check your GA4 key event rate to see which pages convert and which don’t. For online stores, your ecommerce conversion funnel in GA4 is the place to start. Use funnel analysis to find what to test by quantifying exactly how many visitors you lose at each stage. Session recordings show you WHERE on those pages people get confused or give up. And short surveys (one question, not twenty) tell you WHY. Our guide to CRO metrics breaks down which numbers actually predict revenue for your business model.
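Funnel analysis sounds fancy, but the math is one division per step. A sketch with invented numbers (swap in the counts from your own analytics):

```python
# Hypothetical funnel counts, for illustration only.
funnel = [
    ("Product page", 10_000),
    ("Add to cart", 2_500),
    ("Checkout started", 1_200),
    ("Purchase", 600),
]

def drop_off(steps):
    """Return (from_step, to_step, share_lost) for each consecutive pair of steps."""
    results = []
    for (name_a, count_a), (name_b, count_b) in zip(steps, steps[1:]):
        lost = 1 - count_b / count_a
        results.append((name_a, name_b, lost))
    return results

drops = drop_off(funnel)
for step_from, step_to, lost in drops:
    print(f"{step_from} -> {step_to}: {lost:.0%} lost")
```

The step with the biggest loss is usually where your first test belongs.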

This is where a CRO audit comes in. It’s detective work: find the pages that are bleeding conversions, then figure out what’s going wrong. Test ideas should come from evidence, not brainstorming sessions.

Peep Laja (founder of CXL, one of the most respected names in CRO) puts it bluntly: testing random ideas is the number one reason tests produce no results. When you’re not sure what to test, start from a list of CRO tips that work: changes that have produced consistent results across real sites.

Write a test idea that includes “because”

Here’s a template that works: “If we [change], then [metric] will [improve/decrease] because [reason based on data].”

That “because” is everything. It’s the difference between guessing and testing. For the full framework, our guide on marketing experiment design covers every step from hypothesis to launch.

Bad: “Let’s test a shorter form.” (Why? Because someone on your team doesn’t like long forms?)

Good: “If we reduce form fields from 8 to 4, then form completions will increase by 15% because our session recordings show 40% of visitors abandon after the phone number field.”

The second version tells you what to test, what to measure, and what success looks like. Booking.com runs thousands of tests per year. The secret is the discipline of writing clear test ideas with data behind them, not the volume.

Prioritize tests with ICE scoring

You’ll always have more test ideas than time. Prioritize using ICE:

- Impact: How big could the win be? (Score 1-5)

- Confidence: How sure are you this will work, based on your data? (1-5)

- Ease: How easy is it to set up and run? (1-5)

Multiply the three scores (the maximum is 125). Test the highest-scoring ideas first. Simple math, better decisions. This stops you from spending three weeks testing a footer nobody sees while your pricing page headline is actively confusing visitors.
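A spreadsheet works fine for this, but here's the same scoring as a short Python sketch. The ideas and their scores are made up for illustration.

```python
from dataclasses import dataclass

@dataclass
class Idea:
    name: str
    impact: int      # 1-5: how big could the win be?
    confidence: int  # 1-5: how sure are you, based on your data?
    ease: int        # 1-5: how easy is it to set up and run?

    @property
    def ice(self) -> int:
        # ICE score is the product of the three ratings.
        return self.impact * self.confidence * self.ease

# Hypothetical ideas and scores.
ideas = [
    Idea("Redesign footer links", impact=1, confidence=2, ease=5),
    Idea("Rewrite pricing page headline", impact=5, confidence=4, ease=4),
    Idea("Cut form fields from 8 to 4", impact=4, confidence=4, ease=3),
]

ranked = sorted(ideas, key=lambda idea: idea.ice, reverse=True)
for idea in ranked:
    print(idea.ice, idea.name)
```

The footer idea is easy, but its low impact drags it to the bottom of the list.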

Set your visitor count and duration before you start

This is where people mess up. You MUST decide how many visitors you need before starting. Otherwise, you’ll peek at results on day three, see a “winner,” and stop the test. (More on why that’s a disaster in the next section.)

Use a sample size calculator or run a power analysis for your A/B tests to figure out your number. Then commit to a minimum of 14 days, even if you hit your visitor count sooner. Why 14 days? Because traffic behaves differently on weekdays vs. weekends. A 5-day test might only capture Monday-through-Friday behavior and miss the weekend pattern completely.
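If you want to see what a sample size calculator does behind the scenes, here's a sketch using the standard two-proportion formula. The defaults (5% significance, 80% power) are common conventions, not something your tool is guaranteed to use, and the baseline rate and lift are example inputs.

```python
import math
from statistics import NormalDist

def visitors_per_version(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Visitors needed per version to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

def planned_duration_days(n_per_version, daily_visitors, versions=2, minimum_days=14):
    """Days to run: enough for the sample, but never under the 14-day minimum."""
    days_for_sample = math.ceil(n_per_version * versions / daily_visitors)
    return max(minimum_days, days_for_sample)

# Example inputs: 3% baseline conversion rate, hoping for a 20% relative lift.
n = visitors_per_version(baseline_rate=0.03, relative_lift=0.20)
print(n)
print(planned_duration_days(n, daily_visitors=5_000))
```

Try a smaller lift and watch the required visitor count balloon. That's the math behind "test bold changes."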

For low-traffic sites: limit yourself to two versions (A vs. B), and test bigger changes. Trying to detect a 2% difference with 3,000 monthly visitors is like trying to hear a whisper at a concert. Test bold changes that create big, detectable differences instead.

Kirro uses math that works with less traffic (called Bayesian statistics), which helps smaller sites get answers faster. But even with better math, you still need enough visitors to trust the result.
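What does a Bayesian result look like? Roughly: "there's an X% chance B is better than A." The sketch below shows the general idea with a textbook Beta-Binomial model and random sampling. It is not Kirro's actual implementation, and the visitor and conversion counts are invented.

```python
import random

def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20_000, seed=1):
    """Estimate the chance that B's true conversion rate is higher than A's."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Sample a plausible rate for each version, starting from a flat prior.
        rate_a = rng.betavariate(conv_a + 1, n_a - conv_a + 1)
        rate_b = rng.betavariate(conv_b + 1, n_b - conv_b + 1)
        if rate_b > rate_a:
            wins += 1
    return wins / draws

# Hypothetical results: 30 of 1,000 converted on A, 45 of 1,000 on B.
print(prob_b_beats_a(conv_a=30, n_a=1_000, conv_b=45, n_b=1_000))
```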

Why most CRO tests fail (and what to do about it)

Every other CRO testing guide skips this part.

Ronny Kohavi ran experimentation at Microsoft and Bing. He reported that only 10-20% of tests at Google and Bing generate positive results. At Microsoft broadly, one-third of tests help, one-third are neutral, and one-third actually hurt performance. These are some of the best testing teams in the world.

Google can’t get more than 1 in 5 tests to win. So your 3 failed tests in a row? That’s normal. Not a sign you’re doing it wrong.

The peeking problem

The thing that actually ruins test results? Checking early and stopping when things look good.

Evan Miller’s research showed the damage. Check results daily, stop when you see a “winner,” and your false positive rate jumps from 5% to 26%. One in four “winning” tests is pure noise. Looks real. Isn’t. Understanding statistical errors in A/B testing helps you see exactly why this happens.

Think of it like flipping a coin 10 times, getting 7 heads, and declaring the coin is rigged. You need more flips. Way more flips.
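You can check the coin example with exact math. This sketch computes how often a perfectly fair coin gives you at least 7 heads in 10 flips, then does the same for 70 heads in 100.

```python
from math import comb

def prob_at_least(heads: int, flips: int) -> float:
    """Chance of getting at least `heads` heads in `flips` fair coin flips."""
    return sum(comb(flips, k) for k in range(heads, flips + 1)) / 2 ** flips

print(round(prob_at_least(7, 10), 3))  # 0.172
print(prob_at_least(70, 100))
```

About 17% of the time a fair coin looks "rigged" after 10 flips. With 100 flips, the same 70% heads rate is vanishingly rare. Same ratio, very different evidence.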

This is the most common A/B testing mistake we see. Someone sets up a test, checks it every morning, sees Version B “winning” on day four, celebrates, and makes it permanent. Two months later, conversions are exactly where they started. The “win” was a mirage.

Bayesian testing approaches handle this better because they account for continuous monitoring. But even with better math, you should set a minimum test duration and stick to it.
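You can watch the peeking problem happen in a simulation. The sketch below runs A/A tests (both versions are identical, so every "winner" is a false positive) and compares checking daily against checking once at the end. It's a simplified setup with invented traffic numbers, so don't expect it to reproduce the 26% figure exactly.

```python
import math
import random

def is_significant(conv_a, n_a, conv_b, n_b, z_crit=1.96):
    """Two-proportion z-test at roughly the 5% level."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return False
    return abs(conv_a / n_a - conv_b / n_b) / se > z_crit

def simulate_aa_tests(runs=300, days=14, daily_per_version=100, rate=0.10, seed=7):
    """Return (false positive rate with daily peeking, rate with one final check)."""
    rng = random.Random(seed)
    peeking_hits = 0
    fixed_hits = 0
    for _ in range(runs):
        conv_a = conv_b = n = 0
        flagged_any_day = False
        for _day in range(days):
            n += daily_per_version
            conv_a += sum(rng.random() < rate for _ in range(daily_per_version))
            conv_b += sum(rng.random() < rate for _ in range(daily_per_version))
            if is_significant(conv_a, n, conv_b, n):
                # A daily peeker would have stopped and declared a winner here.
                # We keep going only so the final-day check can be compared.
                flagged_any_day = True
        peeking_hits += flagged_any_day
        fixed_hits += is_significant(conv_a, n, conv_b, n)
    return peeking_hits / runs, fixed_hits / runs

peeking_rate, fixed_rate = simulate_aa_tests()
print(f"Daily peeking: {peeking_rate:.0%} false winners")
print(f"One check at the end: {fixed_rate:.0%} false winners")
```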

What to do about it

Fix 1: Set your test duration BEFORE starting. Write it down. Don’t touch the results until that date.

Fix 2: Test bigger, bolder changes. Small tweaks on low-traffic sites will never reach confidence. Don’t A/B test button colors. Test completely different headlines, layouts, or value proposition examples that reframe your offer entirely.

Fix 3: Document everything. A test that shows your audience doesn’t respond to urgency messaging (“Only 3 left!”) is just as valuable as a winning test. That’s data you can use for every future decision. The ROI comes from the testing program, not individual wins. And if your tests involve page structure or content changes, read up on balancing CRO tests with SEO so a conversion win doesn’t become a rankings loss.

Fix 4: If your testing program keeps stalling, it might be a program problem, not a testing problem. Our guide to building a structured CRO program covers the governance, reporting, and knowledge base that keep testing sustainable. Or consider whether it’s time to bring in a CRO specialist or work with agencies that specialize in CRO testing to audit your process and fix your prioritization.

A losing test that teaches you something beats a “winning” test that was just random noise.

Split testing examples that actually worked

Enough theory. Here are CRO tests that produced real, measurable results.

Headline test (A/B test): The Weather Channel decluttered their page and focused on their core value proposition. Result: 225% more conversions. They didn’t add anything. They removed distractions.

Button placement (A/B test): Clear Within moved their “add to cart” button above the fold (the part of the page visible without scrolling). Result: 80% more add-to-carts. This kind of test works even with moderate traffic. The change is big enough to create a big, measurable difference.

Full page redesign (split URL test): Hubstaff built a completely new version of their signup page and tested it against the original. Result: 49% increase in visitor-to-trial conversions. This needed a split URL test because they changed everything, not just one element.

Then there’s checkout design. Baymard Institute studied dozens of ecommerce sites and found the average site can gain 35% more conversions just from a better checkout flow. Fewer fields. Clearer progress indicators. Guest checkout. For more ecommerce CRO tests like these, we built a dedicated guide.

And CloudSolve? They just rewrote their homepage from tech jargon to plain benefits. 52% more conversions. The product didn’t change. The words did.

Notice the pattern? Every winning test above does one of three things. Simplify (remove clutter). Clarify (make the message obvious). Reposition (move important things where people actually look). The flashy redesigns get attention, but the simple stuff works more often.

CRO testing tools: what you need to get started

You don’t need a dozen tools. You need two categories covered:

A/B testing platform: This is the thing that splits your traffic, shows different versions, and tracks results. Kirro is what we built for this. Set up in 3 minutes. Paste a script, pick a page, change what you want with a visual editor. It uses Bayesian statistics (math that works faster with less traffic) and shows results in plain language. EUR 99/month, unlimited tests. Full disclosure: this is us.

For a full breakdown, check our guides on A/B testing tools, CRO tools, and CRO software. We also have an A/B testing software comparison if you want to go tool by tool.

Visitor behavior tools: Heatmaps, session recordings, and scroll maps. These tell you what to test. Google Analytics 4 gives you the basics for free. Dedicated tools like Hotjar or Microsoft Clarity add visual behavior data.

Optional but helpful: Survey tools for asking visitors “what almost stopped you from buying?” and qualitative testing platforms like Lyssna or UserTesting for preference tests and five-second tests.

In our experience, the winning combo is session recordings to find the problem, then an A/B test to validate the fix. Beats guessing every time. You can set up your first test for free and see the process yourself.

FAQ

What is CRO in testing?

CRO testing (conversion rate optimization testing) is running structured tests on your website to increase the percentage of visitors who convert. A “conversion” could be a purchase, signup, form submission, or any action that matters to your business. CRO testing includes A/B testing, multivariate testing, split URL testing, bandit testing, and qualitative methods like preference tests. A/B testing is the most common method, but it’s just one tool in the toolkit. The goal is always the same: find what works and do more of it.

What types of tests are used in CRO?

Seven main types: A/B tests (comparing two versions), A/B/n tests (comparing three or more versions), multivariate tests (testing multiple elements at once), split URL tests (comparing entirely different page designs), multi-armed bandit tests (automatically shifting traffic to the winning version), preference and click tests (qualitative feedback from real people), and user session analysis (heatmaps and recordings). The right type depends on your traffic volume, what you’re changing, and how much time you have. Most small businesses should start with A/B testing and qualitative tests.

How do I create a CRO testing plan?

Start with data, not opinions. Use analytics to find your highest-traffic pages with the worst conversion rates. Watch session recordings to see where visitors struggle. Then write test ideas using this template: “If we [change], then [metric] will improve because [data-backed reason].” Prioritize ideas using ICE scoring (Impact x Confidence x Ease). For a prioritized list of CRO recommendations organized by impact and effort, start there. And to understand which changes increase conversion rate most, that guide ranks them by measured impact. Before starting each test, calculate the number of visitors you need and commit to a minimum 14-day duration. Document every result, wins and losses alike. The plan should be a living document that builds on what you learn from each test.

How long should a CRO test run?

Minimum 14 days, even if your sample size formula says you’ll reach enough visitors sooner. This accounts for weekly traffic patterns (weekday vs. weekend behavior). Maximum: 6-8 weeks. If there’s no result by then, the difference between your versions is too small to matter. Stop the test, learn what you can, and move on to a bolder change. The single biggest mistake is stopping early when results “look good.” That leads to false positives about 26% of the time.

Can I run CRO tests with low traffic?

Yes, but adjust your approach. Use only two versions (A vs. B) to keep the required visitor count down. Test bigger, bolder changes (don’t test button colors, test entirely different headlines). Consider bandit testing to reduce wasted traffic. And supplement with qualitative tests (preference tests, five-second tests) that don’t need any website traffic at all. Five people can identify 85% of usability problems. Try setting up a simple A/B test on your highest-traffic page and you’ll see how the numbers work for your site. Our B2B conversion rate optimization guide goes deep on low-traffic testing alternatives. For more on the math behind it, check out CRO blogs from practitioners who’ve shared their low-traffic testing approaches.

Randy Wattilete

CRO expert and founder with nearly a decade running conversion experiments for companies from early-stage startups to global brands. Built programs for Nestlé, felyx, and Storytel. Founder of Kirro (A/B testing).
