Feature flags are on/off switches that developers put in code to control who sees a new feature. A/B testing shows two versions of something to real visitors and measures which one gets more clicks, signups, or purchases. They solve different problems for different people.

If you’re a marketer testing headlines, you need A/B testing. If you’re an engineer rolling out new code slowly, you need feature flags. If someone on your team told you to “use LaunchDarkly for testing,” they handed you a developer tool when you needed something simpler.

This post covers how feature flags and A/B testing differ, the testing methodology behind each one, and which tools fit your team. If you’re choosing between the two, the difference between client-side and server-side A/B testing matters for this decision too.

The short answer (and why it matters)

Think of it this way. A feature flag is a dimmer switch for your website’s code. Your developer installs a new checkout flow but keeps it hidden. They flip the switch for 5% of visitors, watch for bugs, then gradually turn it on for everyone. The goal is a safe launch. Nobody’s measuring which version “wins.” They just want to know: does this break anything?

A/B testing is a taste test between two recipes. You show half your visitors one headline and the other half a different headline. After enough people visit, the numbers tell you which one gets more conversions. The goal is learning which version works better. That’s it. No code, no deployment pipeline.

Both can split traffic. That’s where the confusion starts. But splitting traffic for safety (feature flags) and splitting traffic for measurement (A/B testing) are completely different jobs.

Our take: The confusion usually starts when someone Googles “A/B testing tool” and lands on a developer platform. If you don’t write code for a living, you’re in the wrong aisle.

What is a feature flag?

Your developer ships new code but keeps it behind a switch. They can turn it on for 5% of visitors first, watch for bugs, then slowly roll it out to everyone. If something goes wrong, they flip the switch off. No emergency code change needed. No panic.
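Here’s roughly what that switch can look like in code. This is a minimal sketch, not how LaunchDarkly or any specific tool works: the function names, the `new_checkout` flag name, and the hashing scheme are all our own assumptions for illustration.

```python
import hashlib


def bucket(visitor_id: str, flag_name: str) -> int:
    """Map a visitor to a stable bucket from 0 to 99 for one flag.

    Hashing (instead of picking at random on each visit) means the same
    visitor lands in the same bucket every time.
    """
    digest = hashlib.sha256(f"{flag_name}:{visitor_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(
    visitor_id: str,
    flag_name: str,
    rollout_percent: int,
    kill_switch: bool = False,
) -> bool:
    """Return True if this visitor should see the flagged feature."""
    if kill_switch:
        # Emergency off: nobody sees the feature, whatever the rollout says.
        return False
    return bucket(visitor_id, flag_name) < rollout_percent


# Hypothetical usage: show the new checkout to about 5% of visitors.
show_new_checkout = is_enabled("visitor-123", "new_checkout", rollout_percent=5)
```

Because buckets are stable, raising `rollout_percent` from 5 to 50 only adds visitors. Nobody who already had the feature loses it.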

Most articles treat feature flags as one thing. They’re not. Pete Hodgson wrote the definitive breakdown on Martin Fowler’s site. He identifies four distinct types:

- Release toggles let you ship code that’s hidden until launch day. Your developer pushes the code on Monday but nobody sees it until the switch flips on Thursday. These last days or weeks, then get removed.

- Experiment toggles split visitors into groups to test different experiences. This is the one type that overlaps with A/B testing. Each visitor consistently sees one version.

- Ops toggles are emergency kill switches. Something breaks at 2 AM? Flip the toggle, disable the feature, go back to sleep. Most are short-lived, though a few kill switches stay in place long term.

- Permission toggles control who gets access to what. Think premium features for paying customers. These can live in your code for years.

Only one of those four types has anything to do with testing. The other three are purely engineering concerns. So when someone says “just use feature flags for A/B testing,” they’re talking about one quarter of what feature flags actually do.

And feature flags come with baggage. Old flags that nobody cleans up create what engineers call “flag debt.” One company shared on Hacker News that 100+ abandoned flags were still loading into their systems. They were eating a gigabyte per second of database bandwidth. Nobody remembered what half of them did.

What is A/B testing?

You show half your visitors the blue button and half the red button. After enough people visit, you know which color gets more clicks. That’s it. The whole concept in two sentences.

What makes A/B testing different from just “trying something new” is the measurement part. You’re not guessing which version worked better. You’re counting. You need enough visitors (the sample size formula tells you how many) and enough time. The math has to account for random chance. Tools like Kirro use Bayesian statistics, which can give you a usable answer with less traffic.
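If you’re curious what a sample size formula looks like, here’s a sketch of the classic textbook version for comparing two conversion rates. It’s the frequentist formula, not the Bayesian approach mentioned above, and not necessarily what any particular tool runs. The function name and the 5% and 6% rates are made-up examples.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(
    baseline_rate: float,
    expected_rate: float,
    alpha: float = 0.05,
    power: float = 0.8,
) -> int:
    """Visitors needed in EACH variant for a two-sided two-proportion test.

    alpha is the accepted false-positive rate; power is the chance of
    detecting the lift if it really exists.
    """
    if baseline_rate == expected_rate:
        raise ValueError("The two rates must differ to size a test.")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = (
        baseline_rate * (1 - baseline_rate)
        + expected_rate * (1 - expected_rate)
    )
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical example: 5% baseline conversion, hoping to detect a lift to 6%.
print(sample_size_per_variant(0.05, 0.06))
```

With those example numbers the answer is on the order of 8,000 visitors per version. The smaller the lift you want to detect, the more visitors you need.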

Most marketing A/B testing happens without touching code at all. Visual editor tools let you click on your live page, change a headline or button, and start the test. No developer ticket. No deployment. No feature flags involved.

And it’s not just headlines. You can test pricing page layouts, hero images, button text, form length. Anything your visitors see and interact with. If you want the full picture of how testing types relate, here’s what split testing means and how multivariate testing (testing multiple changes at once) fits in.

According to Market Research Future, 77% of companies run A/B tests on their websites. And the marketing segment accounts for over 45% of all A/B testing software revenue. A/B testing is, by the numbers, a marketing activity. Not an engineering one.

Our take: If you’ve been tracking your conversion rates and wondering whether a different headline would do better, you don’t need feature flags. You need a simple test. Try one on your own site. Takes about three minutes.

When you need one vs the other

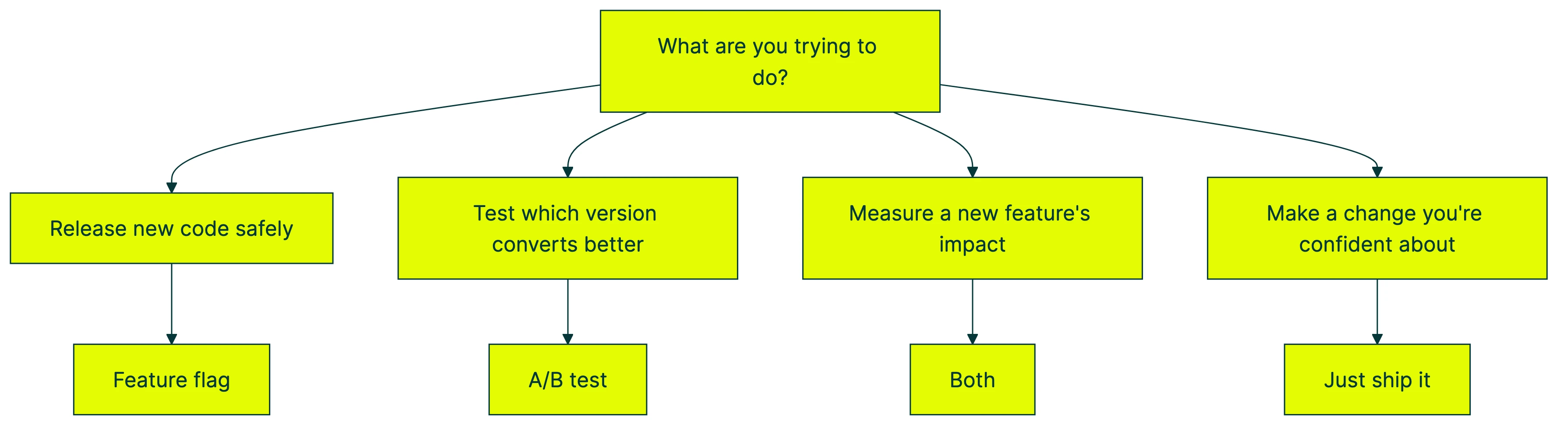

Here’s a quick way to figure out which tool fits your situation:

You need a feature flag when you’re pushing new code and want to control who sees it. Maybe you’re rolling out a new checkout flow to 10% of visitors first. Or you need a kill switch in case something breaks at midnight. These are engineering decisions about deployment safety.

You need A/B testing when you want data on which version performs better. You have two headlines and want to know which gets more signups. You’re designing a marketing experiment with a clear goal. The question isn’t “is this safe to launch?” The question is “which one wins?”

You need both when you’re a product team testing a brand-new feature in production. The feature flag controls who sees the new code. The A/B test measures whether it moves your metrics. Microsoft runs over 10,000 tests per year this way.

You need neither when you’re just making a change you’re confident about. New logo? Just ship it. Fixed a typo? Just ship it. Not every change needs a test or a toggle.

Watch out for the “fake A/B test” trap. Using a feature flag to show different content to different visitors isn’t an A/B test. Not unless you’re randomly splitting traffic and actually measuring results. Without that, you’re just guessing with extra steps. It’s one of the more subtle A/B testing mistakes teams make.
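What does “actually measuring” look like? Here’s one possible sketch: a simple Bayesian comparison that estimates how likely version B is to beat version A, given the conversion counts from a random split. The function name, the uniform starting assumption (a Beta(1, 1) prior), and the example counts are ours, for illustration only. Real tools make their own choices here.

```python
import random


def prob_b_beats_a(
    conv_a: int,
    visitors_a: int,
    conv_b: int,
    visitors_b: int,
    draws: int = 20000,
    seed: int = 0,
) -> float:
    """Estimate the probability that B's true conversion rate exceeds A's.

    Each version's rate gets a Beta posterior (starting from a uniform
    Beta(1, 1) prior). We sample both posteriors many times and count how
    often B comes out ahead.
    """
    rng = random.Random(seed)  # fixed seed so reruns give the same estimate
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(1 + conv_a, 1 + visitors_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + visitors_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


# Hypothetical counts: 100 of 1,000 visitors converted on A, 150 of 1,000 on B.
print(prob_b_beats_a(100, 1000, 150, 1000))
```

The input only means something if visitors were split at random. If a developer hand-picked who saw version B, the number that comes out is still just a guess.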

For a quick visual introduction, watch Fireship’s video explaining feature flags in 100 seconds.

The real difference: who owns it and why

Every competitor article skips the most important question: who on your team is actually going to use this?

Feature flags are owned by engineering and DevOps. They care about deployment safety, rollback speed, and keeping their codebase clean. Their tools live inside the code. They configure flags in config files or developer dashboards. The interface assumes you know what a boolean (true/false value) is.

A/B testing is owned by marketing and growth. They care about conversion rates, revenue impact, and customer behavior. Their tools sit on top of the website. They use visual editors to make changes. The interface assumes you know what a button is.

The tooling reflects this split perfectly:

Feature flag tools (built for engineers): LaunchDarkly ($20K+/year), Harness, Unleash, Flagsmith. If you’re evaluating LaunchDarkly competitors, you’re probably an engineering team.

A/B testing tools (built for marketers): Kirro (EUR 99/month), VWO (~$299/month), Crazy Egg. These are the tools with visual editors that don’t require code.

Unified platforms (try to do both): Statsig, GrowthBook, Optimizely, Amplitude. These serve product teams at mid-size and large companies. Browse our full A/B testing software guide for a deeper look.

This scenario plays out constantly: your engineering team sets up LaunchDarkly. Someone says “we can use this for A/B testing too.” They hand you access. You log in. You see a developer dashboard full of code libraries and environment keys. You close the tab.

That’s not a you problem. That’s a wrong-tool problem. It’s like being handed a professional video camera when all you needed was a good phone camera. Both shoot video. One of them you’ll actually use.

Feature flag and A/B testing tools compared

The table below covers Statsig vs LaunchDarkly and LaunchDarkly vs Optimizely in one place:

| Tool | Best for | Feature flags | A/B testing | Visual editor | Price |
|---|---|---|---|---|---|
| LaunchDarkly | Engineering teams | Core product | Secondary | No | $20K+/year |
| Statsig | Product and engineering | Free | Core product | No | Pay per event |
| GrowthBook | Developer teams | Core (open-source) | Core | Yes (Pro plan) | Free or $40/user/month |
| Optimizely | Enterprise teams | Yes (Full Stack) | Core product | Yes | ~$36K/year |
| VWO | Marketing teams | Yes (Rollouts) | Core product | Yes | ~$299/month |
| Kirro | Small teams, founders | No | Core product | Yes | EUR 99/month |

A few things jump out.

Statsig vs LaunchDarkly: Completely different business models. Statsig gives feature flags away for free and charges for analytics events. LaunchDarkly charges per “service connection” (basically, per server). In 2024, LaunchDarkly changed its pricing model and some customers saw 350% cost increases, going from $10K/year to $45K/year.

LaunchDarkly vs Optimizely: Different starting points. LaunchDarkly is engineering-first. Flags are the product, testing is a feature. Optimizely is the reverse. Testing is the product, flags are a feature. If you want the full comparison, see our VWO vs Optimizely breakdown and the Optimizely alternatives guide.

Where Kirro fits: We don’t do feature flags. On purpose. If you’re a marketer or founder who wants to test a headline, you don’t need flag management. You need a simple test with a clear answer. That’s what we built. Check out our full list of best A/B testing tools and split testing software if you want to explore more options.

Why the tools are converging (and why small teams should ignore it)

In 2024, Forrester formally named “Feature Management and Experimentation Solutions” as a single market category. The analyst language is dry, but the message is clear: the wall between these tools is coming down.

Every major vendor is adding the other side’s features. LaunchDarkly added experimentation. Statsig gives away flags to sell analytics. Optimizely, Amplitude, and others are building “all-in-one” platforms.

Why? Because feature flags are a trojan horse. Get your code into a company’s codebase through free feature flags, then upsell them to paid experimentation. It’s a smart business model. But it means the “convergence” is driven by vendor strategy, not by what your team actually needs.

Netflix and Microsoft use feature flags and A/B tests as different layers of the same system. At Netflix, feature flags sit behind A/B test assignments. New features hide behind a flag while the testing platform measures their impact. Brilliant engineering. But they have hundreds of engineers making it work.

The numbers back this up. The DORA 2024 report asked 39,000 professionals about their development practices. The top-performing teams are 2.3x more likely to use trunk-based development (everyone pushes code to one shared branch, using feature flags to hide unfinished work). These are big engineering orgs with dedicated platform teams. Probably not your situation.

If you’re a 5-person marketing team or a solo founder, you don’t need a unified platform. You need the simplest tool that answers the question: “did this change work?” Big companies buy Swiss Army knives because they use every blade. You probably just need a really good pair of scissors.

The teams that get the most value from A/B testing aren’t using the fanciest tools. They’re just running tests. The biggest barrier isn’t feature coverage. It’s complexity. Cookieless testing challenges and Google Optimize shutting down already made the tool market confusing enough. Piling feature flag infrastructure on top doesn’t help most marketers.

Our take: If “unified platform” sounds exciting, ask yourself: will my team actually use both sides of it? If the answer is “probably not,” save yourself $20K/year and pick the tool that does the one thing you need.

FAQ

What’s the difference between feature flags and A/B testing?

Feature flags are switches in your website’s code that let developers control who sees a new feature. They’re about safe releases. A/B testing shows two versions of something to real visitors and measures which performs better. It’s about learning what works. Feature flags control the release. A/B testing measures the impact. They overlap when engineering teams use “experiment toggles” to test new features. But for most marketing teams, A/B testing is the only one you need.

Do marketers need feature flags?

Almost never. Feature flags live inside your codebase and require developer involvement to set up and manage. If you want to test a headline, a button color, or a page layout, use a visual A/B testing tool instead. You’ll get results faster and won’t need to file a dev ticket. Ron Kohavi’s research (Trustworthy Online Controlled Experiments, Cambridge University Press) shows that even at Microsoft, 80% of tests don’t show an improvement. You want to run these tests quickly and cheaply. Not through an engineering pipeline.

Can you A/B test without feature flags?

Yes. Most marketing A/B testing tools (like Kirro) work without feature flags entirely. They inject changes through a lightweight script on your website. No code deployment, no developer involvement, no flag management. Feature flags are only necessary for code-level changes. Think a new recommendation algorithm or a redesigned checkout flow built by your engineering team.

Are feature flags a good idea?

For engineering teams shipping code frequently, absolutely. They reduce risk and let you roll back instantly. The DORA 2024 report found that top engineering teams deploy nearly 1,000x more often than low performers. Feature flags make that possible. For marketing teams testing visual changes? Feature flags add complexity you don’t need. Use the right tool for the job.

Should I turn off feature flags?

Yes, eventually. Feature flags that stay in your code after they’ve served their purpose create “flag debt.” Old switches nobody maintains, sitting in your codebase, slowing things down. One company reported on Hacker News that 100+ abandoned flags were saturating their database. Best practice: remove a feature flag once the feature is fully rolled out. Some teams even set expiration dates that block their builds if a flag isn’t cleaned up in time.
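Here’s a minimal sketch of that expiration-date idea. The `Flag` record, its fields, and the example flags are hypothetical. A real team would load flags from wherever it keeps its flag config and run a check like this in its build pipeline.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Flag:
    name: str
    owner: str
    expires_on: date  # the date by which the flag should be removed


def expired_flags(flags: list[Flag], today: date) -> list[Flag]:
    """Return every flag whose expiration date has already passed."""
    return [flag for flag in flags if flag.expires_on < today]


def check_flags(flags: list[Flag], today: date) -> int:
    """Return an exit code for the build: 0 if clean, 1 if any flag is stale."""
    stale = expired_flags(flags, today)
    for flag in stale:
        print(
            f"Flag '{flag.name}' (owner: {flag.owner}) "
            f"expired on {flag.expires_on.isoformat()}"
        )
    return 1 if stale else 0


# Hypothetical flags for illustration.
FLAGS = [
    Flag("new_checkout", "payments-team", date(2025, 3, 1)),
    Flag("holiday_banner", "marketing", date(2030, 1, 15)),
]

exit_code = check_flags(FLAGS, today=date(2025, 6, 1))
```

A build script could pass that code to `sys.exit`, so the build fails until someone removes the expired flag or consciously extends its date.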

Randy Wattilete

CRO expert and founder with nearly a decade running conversion experiments for companies from early-stage startups to global brands. Built programs for Nestlé, felyx, and Storytel. Founder of Kirro (A/B testing).
