Multivariate testing lets you test multiple page elements at the same time. Different headlines, images, and buttons, all mixed and matched to find the winning combination. It sounds like the smart play. Test everything at once, find the best combo, move on.

But most websites don’t have enough visitors to make it work.

In our experience running tests for small and mid-size sites, A/B testing (see our A/B testing guide) delivers faster wins for 90%+ of businesses. Multivariate testing (MVT) has its place, but that place is narrower than the testing industry wants you to believe. The right testing methodology depends on your traffic and goals. Whether you choose MVT or A/B testing, our step-by-step experiment design guide helps you structure the test properly from the start.

What is multivariate testing?

Think of it like a recipe. A/B testing changes one ingredient, say the sugar, and keeps everything else the same. Multivariate testing changes the sugar, the flour, AND the butter all at once. Then it figures out which combination tastes best.

Here’s a real multivariate testing example. Say you want to test your landing page. You’ve got:

- 2 headline options

- 3 hero images

- 2 button texts

That’s 2 × 3 × 2 = 12 different versions of your page. Every visitor sees one combination. (Use the sample size calculator to see how traffic requirements multiply with more variations.) After enough data, you learn which specific mix of headline + image + button gets the most conversions.
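To see the multiplication concretely, you can generate the full list of combinations. This is a minimal sketch using Python's standard `itertools.product`. The element names and variant labels are hypothetical placeholders, not taken from a real test.

```python
from itertools import product

# Hypothetical variants for each page element (placeholders for illustration).
elements = {
    "headline": ["Headline A", "Headline B"],
    "hero_image": ["Image 1", "Image 2", "Image 3"],
    "button_text": ["Start free trial", "Get started"],
}

def all_combinations(elements):
    """Return every page version: one variant chosen per element (full factorial)."""
    names = list(elements)
    return [dict(zip(names, choice)) for choice in product(*elements.values())]

versions = all_combinations(elements)
print(len(versions))  # 12, i.e. 2 x 3 x 2
print(versions[0])
```

Add one more element with two variants and the list doubles to 24. That doubling is why traffic requirements climb so fast.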

What makes MVT different: it reveals how elements interact. A headline that crushes it with Image A might flop with Image B. Regular A/B testing can’t catch that. MVT can.

That interaction part is the whole point. You’re not just testing whether headline #2 is better. You’re testing whether headline #2 with image #3 and the blue button creates something special together.

There are three types of split testing, and MVT is the most complex. So is that complexity worth it for your site?

Multivariate testing vs A/B testing

Most A/B testing software also supports multivariate tests. But supporting it and being the right tool for it are different things. Here’s how they actually compare:

| | A/B testing | Multivariate testing |
|---|---|---|
| What you test | One change per test | Multiple elements at once |
| Versions created | 2 (maybe 3-4) | 8, 12, 16, or more |
| Traffic needed | ~19,500 visitors | ~46,300 visitors (for 6 versions) |
| Time to results | Days to weeks | Weeks to months |
| Complexity | Low | High |
| Reveals interactions | No | Yes |
| Best for | Testing big changes fast | Fine-tuning after A/B wins |

Source: Analytics-Toolkit sample size analysis

That traffic difference isn’t small. A 6-version multivariate test needs 138% more visitors than a simple A/B test to reach the same confidence level. And that’s a modest MVT. With 12 or 16 combinations, the numbers get much steeper. More combinations also raise the risk of Type I errors (false positives) from multiple comparisons: finding “winners” that are really just noise.
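You can put a rough number on the multiple-comparisons risk. Suppose each of k challengers is compared against the control at a 5% significance level. If you treat those comparisons as independent (a simplifying assumption, since comparisons that share one control are correlated), the chance of at least one false “winner” is 1 − 0.95^k. One common and conservative fix is the Bonferroni correction, which divides the significance level by the number of comparisons. This is a sketch of that arithmetic, not any testing tool's built-in method.

```python
def family_wise_error_rate(alpha: float, comparisons: int) -> float:
    """Chance of at least one false positive across independent comparisons."""
    return 1 - (1 - alpha) ** comparisons

def bonferroni_alpha(alpha: float, comparisons: int) -> float:
    """Per-comparison significance level that caps the family-wise rate at alpha."""
    return alpha / comparisons

for versions in (2, 6, 12, 16):
    comparisons = versions - 1  # each challenger vs. the control
    print(versions,
          round(family_wise_error_rate(0.05, comparisons), 3),
          bonferroni_alpha(0.05, comparisons))
```

With 12 versions and no correction, there is roughly a 43% chance that at least one “winner” is pure noise. The stricter per-comparison threshold that fixes this is also part of why each version needs more visitors.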

Brian Massey, founder of Conversion Sciences with 20+ years in the field, runs 50 A/B tests for every 1 multivariate test. That ratio tells you something.

Our take: If you haven’t already tested your headlines, buttons, and layouts through A/B testing, start there. MVT is a graduate-level technique. Make sure you’ve passed the intro course first.

The traffic problem most guides won’t tell you about

Every combination in your test needs enough visitors to produce a reliable result. More combinations mean more visitors, and because every element you add multiplies the number of combinations, the traffic bill climbs fast. Use a power analysis to estimate required traffic before committing to an MVT. The math:

| Test type | Versions | Visitors needed | Compared to A/B |
|---|---|---|---|
| A/B test | 2 | 19,470 | Baseline |
| MVT (small) | 4 | 39,425 | +102% |
| MVT (medium) | 6 | 46,338 | +138% |
| MVT (large) | 12+ | 80,000+ | +300%+ |

Source: Georgi Georgiev, Statistical Methods in Online A/B Testing
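A power analysis like this can be sketched with the standard normal approximation for comparing two conversion rates. This sketch assumes a two-sided test at 80% power, with every challenger compared against the control at a Bonferroni-adjusted significance level. The 3% baseline and 20% relative lift are hypothetical inputs. The totals won't match the table above exactly, because its source used its own inputs and methods.

```python
from math import ceil
from statistics import NormalDist

def visitors_per_version(baseline: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors each version needs (two-proportion z-test, normal approx.)."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

def total_visitors(versions: int, baseline: float, relative_lift: float,
                   alpha: float = 0.05, power: float = 0.80) -> int:
    """Total traffic for a test with `versions` arms, Bonferroni-corrected vs. control."""
    comparisons = max(versions - 1, 1)
    per_version = visitors_per_version(baseline, relative_lift,
                                       alpha / comparisons, power)
    return per_version * versions

# Hypothetical inputs: 3% baseline conversion rate, hoping to detect a 20% relative lift.
for versions in (2, 4, 6, 12):
    print(versions, total_visitors(versions, baseline=0.03, relative_lift=0.20))
```

The totals grow for two reasons. There are more versions to fill, and each version needs more visitors to clear the stricter significance level.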

Adobe Target published real examples that make this concrete. A site getting 30,000 visitors per day with a 5% conversion rate? That MVT wraps up in 11 days. Fine. A site getting 5,000 visitors per day with a 2% conversion rate? 468 days. Over a year. For one test.

Peep Laja, CXL founder and a well-known voice in conversion rate optimization, put it bluntly: if your site gets 3,000 visits per month with a 7% conversion rate, you’d need 3 years for meaningful multivariate results.

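It gets worse. According to SEProfy, 46% of all websites get under 15,000 monthly visitors. The median across all industries? Just 3,930 sessions per month. At that level, even a small 4-combination MVT (about 39,400 visitors) would need roughly ten months of the entire site’s traffic. For a page that only gets a share of that traffic, it would take well over a year.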
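Turning a sample-size figure into a calendar estimate is simple division. This sketch uses the 4-version figure from the table and the median traffic cited above. `page_share` is a hypothetical parameter for the fraction of site traffic that reaches the page you're testing.

```python
def months_to_complete(visitors_needed: int, monthly_site_visitors: int,
                       page_share: float = 1.0) -> float:
    """Months until the test page has collected the required visitors."""
    monthly_page_visitors = monthly_site_visitors * page_share
    if monthly_page_visitors <= 0:
        raise ValueError("the test page needs some traffic")
    return visitors_needed / monthly_page_visitors

# 4-version MVT (39,425 visitors) on a site with the median 3,930 sessions/month.
print(round(months_to_complete(39_425, 3_930), 1))                  # 10.0
print(round(months_to_complete(39_425, 3_930, page_share=0.5), 1))  # 20.1
```

If every visitor landed on the tested page, the test would take about ten months. If the page gets half the site's traffic, it would take closer to twenty.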

Bain & Company confirms that useful multivariate testing typically requires at least 50,000 participants. They recommend limiting yourself to 6 to 12 test combinations. More than that and the traffic math breaks down for almost everyone.

There’s a deeper reason the numbers are so brutal. Research from the pharmaceutical industry found that detecting whether two factors interact requires about 8 times more data than detecting whether each factor matters on its own. On a web page, those factors are your page elements, and spotting how they interact is the whole point of MVT. You’re not just testing individual changes. You’re trying to detect chemistry between them.
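A back-of-the-envelope derivation shows why interactions are so data-hungry. Take the simplest case: two elements (A and B) with two versions each, n visitors per combination, and per-visitor outcome variance σ². The interaction is measured here as a difference of differences. This is a textbook illustration, not the method used in the research cited above.

```latex
% \bar{y}_{ij}: conversion rate for version i of element A shown with version j of element B
% Each combination gets n visitors, so Var(\bar{y}_{ij}) = \sigma^2 / n

% Main effect of A: average of the two A-differences
\hat{\Delta}_A = \tfrac{1}{2}\big[(\bar{y}_{21} - \bar{y}_{11}) + (\bar{y}_{22} - \bar{y}_{12})\big],
\qquad
\operatorname{Var}(\hat{\Delta}_A) = \tfrac{1}{4}\cdot\frac{4\sigma^2}{n} = \frac{\sigma^2}{n}

% Interaction: difference of the two A-differences
\hat{\Delta}_{AB} = (\bar{y}_{22} - \bar{y}_{12}) - (\bar{y}_{21} - \bar{y}_{11}),
\qquad
\operatorname{Var}(\hat{\Delta}_{AB}) = \frac{4\sigma^2}{n}
```

The interaction's standard error is twice as large. Required sample size scales with (standard error ÷ effect size)². So an interaction as big as a main effect needs 4× the visitors, and one half as big needs 16×. Interactions are usually smaller than main effects, which puts a multiplier like the 8× above well within range.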

Quick self-check: open your analytics right now. If the page you want to test gets under 50,000 monthly visitors, A/B testing will give you faster, more reliable results. Not sure? Try a simple A/B test first and get results in days instead of months.

The 70/5 gap: why most teams want MVT but never use it

Kevin Anderson, a former Optimizely employee, ran a LinkedIn poll of 157 testing practitioners. The results tell the whole story:

- 70% said testing tools should support MVT

- Only 5% had run more than 3 multivariate tests

- 77% stick to regular A/B tests for their work

His conclusion? “It’s as if having it is more important than using it.”

This isn’t just one guy’s opinion. AB Tasty, one of the major testing platforms, says the same thing: “In 90% of cases, stick to traditional A/B tests.” And that’s a company that sells multivariate testing.

Why does this gap exist? MVT sounds sophisticated. Testing multiple things at once feels like the smart play. But the practical requirements (huge traffic, dozens of combinations, complex analysis) make it impractical for most marketing teams.

There’s also a design trap. MVT tends to focus on visual tweaks: button colors, image swaps, layout adjustments. Those are rarely the highest-impact changes.

The big wins usually come from changing what your page says, not how it looks. Better copy, a clearer offer, a stronger call to action. You can test all of those with simple A/B tests.

Multivariate testing for UX fine-tuning makes more sense once you’ve already nailed the messaging. But most teams haven’t gotten there yet.

When multivariate testing actually works

MVT isn’t useless. It’s just specific. Multivariate testing in marketing makes sense when all three of these are true:

- Lots of traffic. At least 50,000 monthly visitors to the specific page you’re testing. Not your whole site. That one page.

- You’ve already done the big optimizations. Your headline, your main call to action, your page layout, all already tested and improved through A/B testing. MVT is for fine-tuning, not for finding your first win.

- You suspect elements affect each other. Maybe your headline tone needs to match your image style. Maybe a casual button text only works with a specific layout. If elements genuinely interact, MVT catches what sequential A/B tests might miss.

Here are real multivariate testing examples from companies that had the traffic to pull it off:

- Google tested 41 shades of blue for their link colors. The winning shade generated $200 million in additional revenue. But Google has billions of daily visitors.

- Clarks Shoes ran a 160-variant MVT across their product pages: 60% revenue increase within 12 months, 900% jump in newsletter signups. Major e-commerce traffic made it possible.

- Hyundai Netherlands tested headlines, visuals, descriptions, and testimonials together. Result: 208% click-through rate increase.

- Then there’s NPR, which saw a 10x improvement in conversion (0.18% to 1.91%) testing their “Care Question” call-to-action across different stories.

See the pattern? Every success story involves massive traffic or very patient timelines. Not a coincidence.

If you work with CRO tools, it’s tempting to reach for the most advanced technique. But matching the tool to your traffic matters more than picking the fanciest option. Check our guide on measuring conversion rates in A/B tests first. Make sure the basics are covered.

The opportunity cost nobody talks about

One practitioner on Dynamic Yield wrote something that stuck with us: “I’ve done a lot of multivariate testing over the years, and the results have never, ever, been worth the effort.”

Another practitioner calculated that, at their current traffic, their first multivariate test would take 53 years to reach statistical confidence. Fifty-three years. For a single test.

Think about Brian Massey’s 50:1 ratio. While one multivariate test collects data, you could run and learn from 50 A/B tests. Each gives you a clear answer in days or weeks. Each teaches you something about your visitors.

Every MVT guide skips this part. It’s not about whether MVT works in theory. It’s about what you’re not doing while you wait.

Sequential testing (running one A/B test after another, each one a natural check-in point) takes longer to work through every element on the page. But you get a useful answer every week or two along the way. With MVT, you might learn something powerful. Eventually. If you have enough traffic. If you set it up right. If you wait long enough.

For most businesses, getting 80% of the way there through quick A/B tests beats chasing the 100% perfect combination through months of multivariate testing.

Our take: Start with A/B testing. Always. Run a dozen tests. Learn what moves the needle on your specific site. If you get to a point where you’ve tested all the big things and your traffic is through the roof, then consider MVT. Kirro supports both, so you can graduate when you’re ready.

How to run a multivariate test (when you have the traffic)

OK, so you’ve got the traffic and you’ve already squeezed the big wins out of A/B testing. Here’s how to set up an MVT that gives you useful results.

Step 1: Check your numbers. Does the page you want to test get 50,000+ visitors per month? If not, run an A/B test instead. Seriously. Check your sample size requirements before you invest the setup time.

Step 2: Pick your elements. Choose 2 to 3 elements on the page. Maybe the headline, the hero image, and the button text. Create 2 to 3 versions of each. Keep it tight. Every additional version multiplies your combinations and your traffic requirement.

Step 3: Choose your test design. There are three approaches (more on these below):

- Testing every single combination (the thorough route)

- Testing a carefully chosen subset (the efficient route)

- Using mathematical shortcuts to reduce combinations (the Taguchi route)

Step 4: Set it up in your multivariate testing software. In Kirro, you’d pick your page, select the elements you want to change, create your versions, and the tool handles the rest. The setup is similar to an A/B test, just with more versions. You can set up your first test in a few minutes.

Step 5: Wait. Each combination needs at least 100 conversions before the data means anything. Smashing Magazine recommends planning for 1,000 visitors per version and 150 conversions per version minimum. Don’t peek early. Don’t stop early. Let the math do its job.
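You can enforce these per-combination minimums in code before anyone looks at which version is ahead. This sketch applies the Smashing Magazine thresholds quoted above (1,000 visitors and 150 conversions per version). The data layout and function name are hypothetical, not part of any testing tool's API. The check only confirms that the planned minimums were reached. It is not a significance test.

```python
from typing import Dict, List, Tuple

MIN_VISITORS = 1_000
MIN_CONVERSIONS = 150

def combinations_below_minimum(results: Dict[str, Tuple[int, int]]) -> List[str]:
    """Return the combinations that have not yet hit both minimums.

    `results` maps a combination label to (visitors, conversions).
    """
    return [
        label
        for label, (visitors, conversions) in results.items()
        if visitors < MIN_VISITORS or conversions < MIN_CONVERSIONS
    ]

# Hypothetical interim counts for a 4-combination test.
interim = {
    "H1+I1": (2_400, 160),
    "H1+I2": (2_350, 171),
    "H2+I1": (2_410, 149),
    "H2+I2": (980, 70),
}
print(combinations_below_minimum(interim))  # ['H2+I1', 'H2+I2']
```

Keep the test running until this list is empty and the planned sample size has been reached. Stopping the moment one version looks ahead is exactly the early stopping this step warns against.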

Step 6: Read the results. Look at overall effects first (which headline version won across all combinations?), then look at interaction effects (did any specific combo outperform what you’d expect?). The interactions are where MVT earns its keep.
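For a 2 × 2 test (two headlines × two images), you can compute both kinds of effect directly from conversion rates. The counts below are invented to show a case where one headline looks no better on average but clearly interacts with the image. A real analysis would also need a significance test (for example ANOVA or logistic regression), which this sketch leaves out.

```python
from typing import Dict, Tuple

Cell = Tuple[str, str]  # (headline version, image version)

def effects_2x2(results: Dict[Cell, Tuple[int, int]],
                headlines: Tuple[str, str],
                images: Tuple[str, str]) -> Dict[str, float]:
    """Main effects and interaction (difference of differences) in conversion rate."""
    rate = {cell: conv / visits for cell, (visits, conv) in results.items()}
    (h0, h1), (i0, i1) = headlines, images
    # Overall effect: average of the per-level differences.
    headline_effect = ((rate[(h1, i0)] - rate[(h0, i0)]) +
                       (rate[(h1, i1)] - rate[(h0, i1)])) / 2
    image_effect = ((rate[(h0, i1)] - rate[(h0, i0)]) +
                    (rate[(h1, i1)] - rate[(h1, i0)])) / 2
    # Interaction: does the headline's effect change with the image?
    interaction = ((rate[(h1, i1)] - rate[(h0, i1)]) -
                   (rate[(h1, i0)] - rate[(h0, i0)]))
    return {"headline": headline_effect, "image": image_effect,
            "interaction": interaction}

# Hypothetical results: (visitors, conversions) per combination.
results = {
    ("H1", "I1"): (1_000, 50),
    ("H1", "I2"): (1_000, 60),
    ("H2", "I1"): (1_000, 70),
    ("H2", "I2"): (1_000, 40),
}
for name, value in effects_2x2(results, ("H1", "H2"), ("I1", "I2")).items():
    print(f"{name}: {value:+.3f}")
```

Here headline H2 gains two points with image I1 and loses two with I2. On average, its effect comes out at zero. The interaction term (−0.04, or four percentage points) is what reveals that the headlines behave differently depending on the image.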

Common mistakes: Testing too many elements at once. Not having enough traffic (the #1 killer). Stopping early because one version looks like it’s winning. And removing underperforming versions mid-test, which messes up the math. Our guide on common testing mistakes covers these in more detail.

Types of multivariate tests: full factorial vs fractional factorial vs Taguchi

Not all multivariate testing designs work the same way. The differences matter because they determine how much traffic you need and how detailed your results will be.

Full factorial testing means testing every possible combination. If you have 3 headlines and 2 images, you test all 6 combos. Most thorough approach. You get complete data on every combination and every interaction between elements.

The downside? It needs the most traffic by far. Think of it like tasting every dish at a restaurant. You’ll know exactly what you like, but you’ll be there all night.

Fractional factorial testing means testing a smartly chosen subset of combinations. Statistics has ways to pick which combos to test so you still get most of the insights with way less traffic. You won’t catch every subtle interaction, but you’ll catch the big ones.
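One classic fractional design is the 2^(3-1) half fraction. It tests three elements with two versions each in 4 combinations instead of 8. To build it, code each version as −1 or +1 and set the third element's level to the product of the first two. This sketch assumes exactly that setup, and the element names are placeholders.

```python
from itertools import product

def half_fraction_three_factors():
    """2^(3-1) fractional factorial: 4 of the 8 combinations, with C = A * B."""
    return [(a, b, a * b) for a, b in product((-1, 1), repeat=2)]

runs = half_fraction_three_factors()
labels = {"headline": ("H1", "H2"), "image": ("I1", "I2"), "button": ("B1", "B2")}
for run in runs:
    # Map -1 to the first version and +1 to the second.
    print({name: versions[(level + 1) // 2]
           for (name, versions), level in zip(labels.items(), run)})

# The catch: the button column is identical to headline x image,
# so the button's effect cannot be separated from that interaction.
button = [c for _, _, c in runs]
headline_x_image = [a * b for a, b, _ in runs]
print(button == headline_x_image)  # True
```

Every version of every element still appears equally often, so you can still estimate each element's overall effect. The cost is that each overall effect is entangled (aliased) with an interaction between the other two elements. That's the sense in which a fractional design misses some interactions. Up to which version is labeled first, this layout is also Taguchi's L4 orthogonal array.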

It’s like sampling five dishes instead of fifty. You learn enough to pick your favorites.

Taguchi testing (named after Japanese engineer Genichi Taguchi) uses pre-built mathematical tables to select specific combinations. Adobe Target uses this approach. It cuts traffic requirements way down.

The tradeoff: IEEE research has shown that it only tests certain combination types and can miss unexpected ways elements affect each other. VWO discourages it, calling it “not theoretically sound” for web testing. That’s harsh, but it does have real limitations.

For multivariate testing in research contexts, full factorial is the gold standard. NIH studies confirm it extracts the most information. For practical website testing, fractional factorial is usually the sweet spot. You get 80% of the insight for 40% of the traffic.

Our recommendation? If you’re going to do MVT at all, fractional factorial is where most businesses should land. Full factorial if you’re Google. Taguchi if you’re truly traffic-constrained but still want to try (just know the tradeoffs).

Should you use multivariate testing or A/B testing?

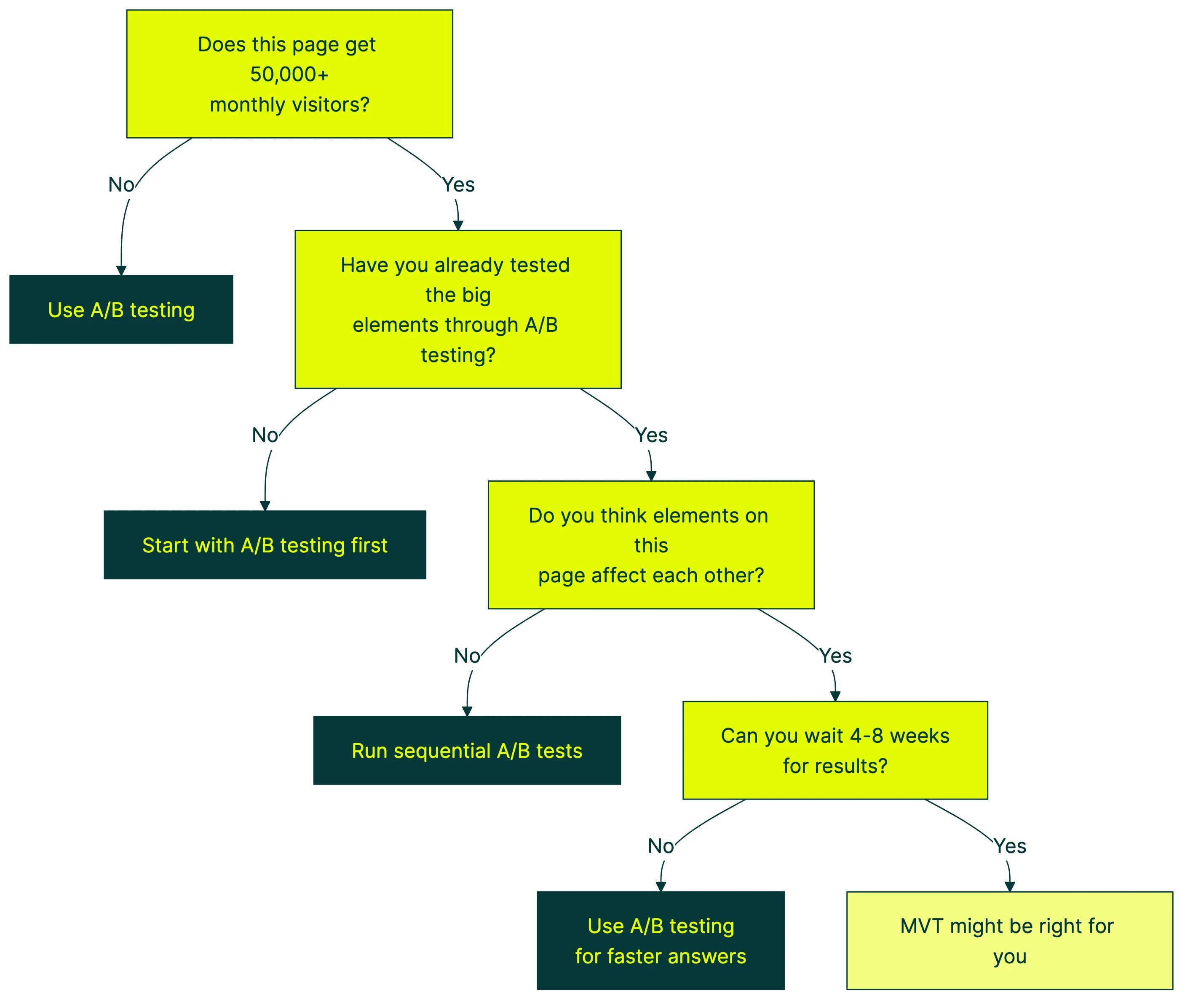

Instead of guessing, walk through this:

The honest answer for most businesses? A/B testing. Master it first. Exhaust the big wins. When A/B tests stop producing lifts and your traffic is through the roof, that’s MVT territory. If traffic is limited but you want to reduce wasted exposure to the losing version, a multi-armed bandit approach is often a better fit than MVT.
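The criteria in this article can be condensed into a rough rule of thumb. This sketch encodes them as stated here: 50,000+ monthly visitors to the tested page, big A/B wins already exhausted, and a real suspicion that elements interact. The function and parameter names are hypothetical. The thresholds are this article's guidance, not a statistical law.

```python
def suggest_test_type(monthly_page_visitors: int,
                      ab_tests_still_producing_lifts: bool,
                      suspect_interactions: bool,
                      want_less_exposure_to_losers: bool = False) -> str:
    """Rule of thumb based on this article's criteria (not a statistical law)."""
    enough_traffic = monthly_page_visitors >= 50_000
    if enough_traffic and not ab_tests_still_producing_lifts and suspect_interactions:
        return "multivariate test"
    if not enough_traffic and want_less_exposure_to_losers:
        return "multi-armed bandit"
    return "A/B test"

print(suggest_test_type(8_000, True, False))        # A/B test
print(suggest_test_type(120_000, False, True))      # multivariate test
print(suggest_test_type(8_000, True, False, True))  # multi-armed bandit
```

Notice that "A/B test" is the fallback. MVT only comes out when all three conditions hold at once.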

The enterprise tools won’t tell you this, but sequential A/B testing (testing one thing, then the next, then the next) gets you 80 to 90% of the way to the “perfect” combination MVT would find. At a fraction of the traffic cost. In a fraction of the time. For a complete map of which CRO test type fits your situation (A/B, A/B/n, split URL, and bandit tests), see our guide to CRO test types. It covers the full decision framework.

One caveat: MVT doesn’t apply to all types of testing. SEO A/B testing works completely differently, and MVT isn’t relevant there. Similarly, mobile app testing often has sample size constraints that make MVT impractical for all but the largest apps.

Ready to start? Pick the right A/B testing tool and run a few simple tests first. You can always graduate to MVT later.

If you’re comparing enterprise options, our VWO vs Optimizely comparison and Optimizely alternatives guide cover the big names that support both. Teams that lost Google Optimize can check our Google Optimize alternatives roundup. And our split testing tools guide covers which platforms handle MVT well.

FAQ

What is multivariate testing?

Testing multiple page elements at the same time (headline + image + button, for example) to find which specific combination gets the most conversions. Unlike A/B testing, which changes one thing at a time, multivariate testing changes several things and measures how they work together.

What is the difference between A/B testing and multivariate testing?

A/B testing changes one element per test. MVT changes multiple elements and reveals how they interact. A/B needs less traffic and gives faster results. MVT needs 2 to 5 times more visitors but shows which combination performs best as a group.

For most sites, A/B testing is the right call. MVT is for high-traffic sites that have already picked the low-hanging fruit. See the comparison table above.

What is an example of a multivariate test?

Testing 3 headlines × 2 hero images × 2 button colors on a landing page. That creates 12 different versions. Each visitor sees one combination at random. After collecting enough data, you learn which specific mix of headline + image + button converts best. You also learn whether certain elements boost or cancel each other out. For instance, maybe a casual headline works great with a friendly photo but poorly with a corporate stock image.

How much traffic do you need for multivariate testing?

At minimum, 10,000 monthly visitors to the page you’re testing. Realistically, 50,000+. Each combination needs at least 100 conversions. With 12 combinations at a 3% conversion rate, that’s roughly 40,000 visitors minimum.
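Here is that arithmetic as a sketch. Divide the minimum conversions per combination by the expected conversion rate, round up, and multiply by the number of combinations. The 100-conversion floor and 3% rate come from the answer above. Treat the result as a floor, not a power calculation.

```python
from math import ceil

def minimum_visitors(combinations: int, conversion_rate: float,
                     min_conversions_per_combination: int = 100) -> int:
    """Visitors needed so each combination can reach the conversion floor."""
    if not 0 < conversion_rate <= 1:
        raise ValueError("conversion_rate must be in (0, 1]")
    per_combination = ceil(min_conversions_per_combination / conversion_rate)
    return per_combination * combinations

print(minimum_visitors(12, 0.03))  # 40008, i.e. roughly 40,000
```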

Bain & Company recommends a sample of 50,000+ and limiting yourself to 6 to 12 test combinations. A good testing tool like Kirro shows you whether your traffic can support the test you’re planning, so you know upfront if MVT is realistic.

What is the difference between multivariate testing and ANOVA?

ANOVA (Analysis of Variance) is the math used to analyze multivariate test results. MVT is the testing approach. ANOVA is the tool that tells you which results are real versus random chance.

Think of it this way: MVT is the recipe. ANOVA is the taste test that tells you which version actually tastes better. You don’t need to understand ANOVA to run a multivariate test. Your testing tool handles the math.

Randy Wattilete

CRO expert and founder with nearly a decade running conversion experiments for companies from early-stage startups to global brands. Built programs for Nestlé, felyx, and Storytel. Founder of Kirro (A/B testing).
