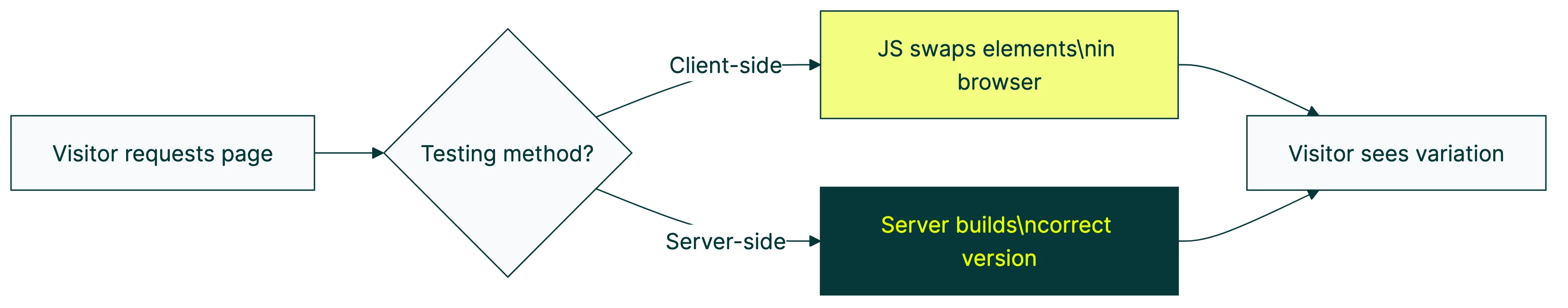

Client-side testing changes what visitors see inside the browser. Server-side testing decides what to send before the page even loads. That’s the core difference between client-side and server-side A/B testing.

For most small businesses testing headlines, buttons, and landing pages, client-side does the job. Server-side A/B testing is built for engineering teams running backend tests on pricing logic, algorithms, or mobile apps. It’s powerful, but it comes with a price tag and a developer dependency that most 5-to-50 person companies don’t need.

Choosing between them is a key testing methodology decision. This guide covers how each approach works, what it costs, and when to switch, including the trade-offs the big testing platforms skip (because they’re trying to sell you both).

What’s the actual difference?

Think of it like a restaurant menu. Client-side testing is putting a sticker over a menu item after the menu is printed. Server-side testing is printing two different menus and handing the right one to each table.

Both approaches run A/B tests. Both split visitors. Both tell you which version wins. The difference is where the work happens. If you’re not sure what split testing means, read up on that first. Otherwise, here’s the side-by-side:

| | Client-side | Server-side |
|---|---|---|
| Where it runs | In the visitor’s browser | On your web server |
| Who sets it up | Marketers (visual editor) | Developers (code changes) |
| What it can test | Headlines, images, buttons, page layout | Pricing logic, algorithms, checkout flows, APIs |
| Setup time | Minutes | Hours to days |
| Developer needed? | No | Yes, for every test |
| Flicker risk | Yes (manageable) | None |
| Works on mobile apps? | No | Yes |

Our take: Most articles on this topic are written by companies selling server-side tools. They’ll tell you “both are complementary.” That’s technically true, but it’s like saying a bicycle and a helicopter are both transportation. Pick the one that matches your trip.


How client-side testing works (and why most tools use it)

Here’s what actually happens when you run a client-side test:

- A visitor loads your page

- A small JavaScript snippet loads with it

- The snippet changes what’s on the page (the tool swaps headlines, button text, images, whatever you set up)

- The visitor sees Version A or Version B

- The tool tracks what happens next
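Those five steps can be sketched in a few lines. This is a rough illustration, not how any particular tool works: a real snippet is JavaScript running in the browser, and the names here (`VARIANTS`, `assign_variant`, `apply_variant`, `track`) are made up for the example. Python is used only to keep the logic readable.

```python
import hashlib

# Hypothetical test setup: which page elements each version overrides.
VARIANTS = {
    "A": {},  # control: leave the page as it arrived
    "B": {"headline": "Start testing in five minutes"},
}

def assign_variant(visitor_id: str, test_name: str) -> str:
    """Split visitors 50/50, always giving the same visitor the same version."""
    digest = hashlib.sha256(f"{test_name}:{visitor_id}".encode()).digest()
    return "A" if digest[0] % 2 == 0 else "B"

def apply_variant(page: dict, variant: str) -> dict:
    """Swap in the variant's content on a copy of the already-loaded page."""
    return {**page, **VARIANTS[variant]}

def track(events: list, visitor_id: str, variant: str, action: str) -> None:
    """Record what the visitor did, tagged with the version they saw."""
    events.append({"visitor": visitor_id, "variant": variant, "action": action})

page = {"headline": "Welcome", "button": "Sign up"}           # 1. the page loads
events = []
variant = assign_variant("visitor-123", "homepage-headline")  # 2. the snippet runs
shown = apply_variant(page, variant)                          # 3-4. visitor sees A or B
track(events, "visitor-123", variant, "clicked_button")       # 5. track what happens next
```

The key detail is the order: the original page exists first, and the change is layered on top afterwards.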

The magic is the visual editor. You click on a headline, type a new one, hit start. No code. No developer tickets. No waiting two weeks for a sprint slot.

That’s why the vast majority of A/B testing software is client-side. It’s the simplest path for the widest audience. Tools like Kirro use this approach because it lets marketing teams run tests on their own, without bugging engineering. It’s also how most no-code platforms handle testing. For example, Webflow split testing relies on client-side tools to avoid touching the underlying code.

Most client-side tools also use math that works with smaller traffic (called Bayesian statistics) to give you answers faster. Results show up in plain language, not p-values.
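For the curious, here’s roughly what that math can look like. This is a minimal sketch, assuming a Beta-Binomial model with a flat prior and random sampling to compare the two versions. That model is an assumption for illustration; each tool picks its own, and the function name `prob_b_beats_a` is made up.

```python
import random

def prob_b_beats_a(conv_a, visitors_a, conv_b, visitors_b, draws=20000, seed=0):
    """Estimate the chance that version B's true conversion rate beats A's.

    Assumes a flat Beta(1, 1) prior for each version (an illustrative choice).
    """
    rng = random.Random(seed)  # fixed seed so the estimate is repeatable
    wins = 0
    for _ in range(draws):
        # Draw a plausible conversion rate for each version given the data so far.
        rate_a = rng.betavariate(1 + conv_a, 1 + visitors_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + visitors_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws

# Example: 50 of 1,000 visitors converted on A, 80 of 1,000 on B.
chance = prob_b_beats_a(50, 1000, 80, 1000)
print(f"Chance B is better: {chance:.0%}")
```

That single percentage is the kind of plain-language answer a tool can show instead of a p-value.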

The trade-off? There’s a thing called “flicker.” More on that in a minute. It’s a bigger deal than it sounds.

How server-side testing works

Server-side testing flips the process. Instead of changing the page after it arrives, your server builds the right version before sending it.

Your developer installs a toolkit in your website’s backend code (the industry calls this an SDK). Then, for each test, they write code that says: “If this visitor is in Group A, show them the blue button. If Group B, show the green one.”
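In code, that “if Group A, blue; if Group B, green” logic might look something like this. It’s a sketch under assumptions: no real SDK is used, and `assign_group`, `render_page`, and the `button-color` test name are hypothetical stand-ins for whatever your toolkit provides.

```python
import hashlib

BUTTON_COLORS = {"A": "blue", "B": "green"}

def assign_group(visitor_id: str, experiment: str) -> str:
    """Put each visitor in Group A or B, the same group on every request."""
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode()).digest()
    return "A" if digest[0] % 2 == 0 else "B"

def render_page(visitor_id: str) -> str:
    """Build the finished HTML on the server, before anything is sent."""
    group = assign_group(visitor_id, "button-color")
    color = BUTTON_COLORS[group]
    return f'<button class="cta cta-{color}">Sign up</button>'

print(render_page("visitor-123"))
```

Notice there is no “original” page to swap out. The decision is made before the response leaves the server.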

The visitor’s browser gets a finished page. No swapping. No flicker. Clean.

This matters for:

- Backend logic: testing different pricing, search rankings, recommendation algorithms. You can’t change these from the browser because they happen on the server.

- Mobile apps: a JavaScript snippet can’t reach a native iOS or Android app. Server-side is the only option.

- Complex tests: testing multiple things at once (multivariate testing) across several pages is easier to coordinate from the server.

- Feature rollouts: turning a feature on for 10% of visitors, then 50%, then everyone (the industry calls these feature flags). That’s closely related to server-side testing, though they’re different tools for different purposes.
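The gradual rollout in that last bullet is simple to sketch. This is one common way to do it, assuming each visitor is hashed into a stable bucket from 0 to 99. The names `bucket`, `is_enabled`, and the `new-checkout` flag are hypothetical.

```python
import hashlib

def bucket(visitor_id: str, flag: str) -> int:
    """Give each visitor a stable bucket from 0 to 99 for this flag."""
    digest = hashlib.sha256(f"{flag}:{visitor_id}".encode()).hexdigest()
    return int(digest, 16) % 100

def is_enabled(visitor_id: str, flag: str, rollout_percent: int) -> bool:
    """Turn the feature on for visitors whose bucket falls under the rollout."""
    return bucket(visitor_id, flag) < rollout_percent

# Raise the percentage over time: 10, then 50, then 100.
for percent in (10, 50, 100):
    print(percent, is_enabled("visitor-123", "new-checkout", percent))
```

Because the bucket never changes, anyone who got the feature at 10% keeps it at 50%. Nobody sees it switch on and off as you ramp up.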

The catch? Every test needs a developer. Code changes. Code review. Deployment. Cleanup after the test ends.

Want to test a different headline on your pricing page? That’s a developer ticket, a sprint planning conversation, and a two-week wait. With client-side, it’s a 5-minute job you can do between meetings.

Nobody in the top search results for this topic talks about that cost honestly.

The flicker problem (and why it matters more than you think)

Flicker is what happens when a visitor briefly sees the original page before the test version loads. The page “jumps” or changes mid-load.

Annoying? Yes. And the fix most tools use actually makes things worse.

Most testing tools try to fix flicker with something called an “anti-flicker snippet.” This code hides the entire page until the test loads. Sounds smart. It isn’t.

Web performance researcher Andy Davies found that Gymshark’s page stayed completely blank for 1.7 seconds because of anti-flicker code. DebugBear measured one site where removing the anti-flicker snippet improved load time from 6.0 seconds to 2.7 seconds. That’s a 3.3 second improvement, just by removing the “fix.”

SpeedCurve documented that Google Optimize’s default anti-flicker timeout was 4 seconds. Google’s own “good” threshold for Largest Contentful Paint (LCP, the time until the main content of the page is visible) is 2.5 seconds. So in the worst case, the anti-flicker snippet alone blew past Google’s own speed limit before any content appeared.

LCP is a Core Web Vital (Google’s report card for how fast your site loads). A slow LCP can hurt your Google rankings. So a heavy testing tool with anti-flicker code means you’re trading SEO performance for testing ability.

Not a great trade if you’re testing to improve conversions you got from organic search.

You don’t need server-side to fix this. You need a lighter tool.

Server-side testing eliminates flicker entirely because the right version loads from the start. But you don’t need to rebuild your testing infrastructure.

Lightweight client-side tools with small scripts (under 10KB) cut flicker to near zero. Kirro’s script is 9KB. Enterprise tools load 100-200KB. The flicker problem is mostly a heavy tool problem, not a client-side problem.

If you’re running tests while also caring about cookieless A/B testing and privacy, the script weight matters even more.

Our take: Enterprise testing tools created the flicker problem. Then they sold server-side testing as the solution. That’s like setting your kitchen on fire and selling you a new house.

Who actually needs server-side testing (and who doesn’t)

A paper in Harvard Data Science Review put it plainly: “Most small companies purchase external platforms. Established technology companies build in-house.” Client-side tools are the external platforms. Server-side is what the tech companies build.

You probably need server-side if you:

- Have a dedicated engineering team that wants to run tests

- Test backend logic (pricing, algorithms, search results)

- Have a mobile app

- Run 100+ tests per year

- Operate a large e-commerce site testing checkout flows

You probably don’t need server-side if you:

- Test headlines, buttons, images, or landing pages

- Have a marketing team, not an engineering team

- Run fewer than 10 tests per month

- Don’t have a mobile app

- Want to design a marketing experiment without filing a dev ticket

Booking.com runs over 1,000 concurrent tests at all times. They need server-side infrastructure. LinkedIn runs 400+ tests per day. They need it too.

Your 20-person SaaS company testing two headline variations? Probably not.

Your tools should match your team size. If you’re a marketing team that wants to test a headline in three minutes, client-side is the answer. If your engineering team wants to test backend pricing logic across microservices, server-side is the answer. Different problems, different tools.

What the data actually says about testing more

One of the big selling points of server-side testing is velocity. More tests, faster. The data says otherwise.

Optimizely analyzed 127,000 experiments and found that 88% of A/B test ideas fail. They don’t produce a positive change. The median company runs just 34 tests per year, roughly 3 per month.

Impact per test peaks when teams run 1-10 tests per engineer per year. Beyond 30 tests per engineer, impact drops by 87%.

More tests didn’t mean more wins. It meant more noise.

Ron Kohavi, who led experimentation at Microsoft and Amazon, confirmed that at Google and Bing, only 10-20% of changes produce positive results. At Microsoft overall, about two-thirds of tested ideas showed negative or neutral effects.

The lesson? Running 3 good tests beats running 30 sloppy ones. Every time.

The industry narrative says server-side is “the future” and client-side is “the old way.” The data disagrees. Better tests beat more tests. And better tests start with better questions, not fancier infrastructure.

Speero and Kameleoon studied 200+ testing programs and found that some teams who switched entirely to server-side started shifting tests back to client-side. Pure server-side created bottlenecks. Every test needed engineering time, which slowed everything down.

Before you invest in server-side infrastructure to “test faster,” make sure you’re not making common A/B testing mistakes on the tests you already run. Get the basics right first. Nail your sample size formula. Understand your minimum detectable effect.

Then scale.
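To make “sample size” and “minimum detectable effect” concrete, here’s a rough sketch of one standard calculation. It assumes a classic two-sided test comparing two conversion rates, with 95% confidence and 80% power as defaults. Those defaults and the function name `sample_size_per_variant` are assumptions for illustration, and your tool may use a different method.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline_rate, relative_mde, alpha=0.05, power=0.80):
    """Approximate visitors needed per version to detect a given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)        # rate you hope the new version hits
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # strictness about false wins
    z_power = NormalDist().inv_cdf(power)          # strictness about missed wins
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)

# Example: 5% baseline conversion rate, hoping to detect a 20% relative lift.
print(sample_size_per_variant(0.05, 0.20))
```

The smaller the lift you want to detect, the more visitors you need. Halving the minimum detectable effect roughly quadruples the sample size.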

The real cost comparison

Everyone compares tool prices. Nobody compares the full cost.

Client-side testing costs:

- Tool: EUR 99/month (Kirro) to $399/month (Convert, VWO)

- Setup: Minutes. Paste a script or use Google Tag Manager.

- Per-test cost: Zero. Marketer runs tests independently.

- Hidden cost: Potential page speed impact (minimized with lightweight tools)

Server-side testing costs:

Server-side tools range from free (GrowthBook, open-source) to $36,000+/year (Optimizely). Setup takes days to weeks: SDK installation, backend changes, deployment pipeline updates.

Every test needs code, code review, deployment, and cleanup. And the hidden cost nobody mentions? Engineering time not spent building product features.

The tool subscription is the smallest part of the server-side budget. A developer spending 4 hours on a single test can cost more than a month of a lightweight client-side tool.

At even $50/hour, that’s $200 per test in developer time alone. Three tests per month and you’re at $600/month in labor, on top of whatever the tool costs.

Server-side testing isn’t free. It’s just billed differently.
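If you want to run the numbers for your own team, the math above fits in one small function. It’s a back-of-the-envelope sketch: `monthly_cost` and its parameter names are made up, and the inputs are the figures from this section (4 developer hours per test, $50/hour, 3 tests per month).

```python
def monthly_cost(tool_fee, tests_per_month, dev_hours_per_test, hourly_rate):
    """Tool subscription plus the developer time spent on tests each month."""
    return tool_fee + tests_per_month * dev_hours_per_test * hourly_rate

# Labor alone for server-side: 3 tests x 4 hours x $50/hour, with a free tool.
server_side_labor = monthly_cost(0, 3, 4, 50)
print(server_side_labor)  # 600
```

Swap in your own hourly rate and test volume. For most teams, the labor term grows much faster than the subscription.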

For a full list of what’s available, check our Google Optimize alternatives guide. Most of those tools are client-side, and most of them will do exactly what a small team needs.

If you want to set up your first test today, you can be running in under five minutes with Kirro. No developer. No deployment pipeline. No meetings about meetings.

When to consider moving to server-side

Some signals that you’ve outgrown client-side:

- You’re testing backend logic that JavaScript can’t reach

- Your engineering team actively wants to run their own tests

- You have a mobile app and need to test across platforms

- Flicker is measurably hurting your conversion rate

- You need feature flags for safe deployments (see how feature flags compare to A/B testing)

The move usually isn’t all-or-nothing. Most mature testing programs run a hybrid: client-side for marketing tests, server-side for engineering tests. Forrester now defines this as its own category called “Feature Management and Experimentation.”

Most companies never reach this point. Only 0.2% of all websites even run A/B tests. Among the top 10,000 sites, that number jumps to 32%.

The gap between “not testing at all” and “client-side testing” is massive. The gap between “client-side” and “server-side” is much smaller, and only matters for specific use cases.

Start with client-side. Get comfortable running tests. If you outgrow it, you’ll know.

Most never do.

FAQ

What is server-side A/B testing?

Server-side A/B testing means the server decides which version of a page or feature to show before it reaches the visitor’s browser. A developer writes code that assigns each visitor to a group and serves the right version.

This eliminates flicker because the page arrives ready. The trade-off: every test requires developer involvement.

Do I need server-side testing for my website?

For most small businesses testing headlines, buttons, and landing pages: no. Client-side tools handle these tests perfectly well. You need server-side if you’re testing backend logic (pricing algorithms, search ranking), have a mobile app, or run a high-volume program with engineering resources dedicated to testing.

Does client-side A/B testing hurt page speed?

It can, but it depends on the tool. Heavy testing scripts (100-200KB) with anti-flicker snippets can add several seconds to page load time. The examples above ranged from a 1.7-second blank page to a 4-second timeout.

Lightweight tools with small scripts (under 10KB) keep the impact near zero. Kirro’s script is 9KB. At 100-200KB, enterprise tools load roughly 11 to 22 times that amount.

Can I use both client-side and server-side testing?

Yes. Mature testing programs often do. Client-side handles marketing tests (headlines, buttons, images). Server-side handles product tests (features, algorithms, pricing logic).

Running both means two tools, two workflows, and coordination between marketing and engineering. Most small businesses don’t need that yet.

What’s the difference between server-side testing and feature flags?

Feature flags (a way to turn features on or off for specific visitors) are a deployment safety tool. Server-side A/B testing uses similar technology but adds statistical measurement to see which version actually performs better. Related but different. For the full breakdown, see our guide on feature flags vs A/B testing.

Randy Wattilete

CRO expert and founder with nearly a decade running conversion experiments for companies from early-stage startups to global brands. Built programs for Nestlé, felyx, and Storytel. Founder of Kirro (A/B testing).
