A CRO program is the system that makes conversion rate optimization ongoing instead of one-off. Not a single audit. Not a random test someone runs when they have an idea. It’s the people, tools, governance, and habits that keep testing running month after month.

Most businesses skip this entirely. They run one test, see no results, and decide testing doesn’t work for them. That’s like going to the gym once, not getting abs, and canceling your membership. The test wasn’t the problem. The lack of a system was.

This guide covers what a CRO program actually includes and why most fail (spoiler: it’s not the tools). Plus how to build one that turns the CRO process into a habit, even if your entire marketing team is just you.

What is a CRO program (and how is it different from just running tests)

There’s a meaningful difference between running a test and running a program. Running a test is: “Let’s try a new headline and see what happens.” Running a program is: “We test one thing every month, document what we learn, and use those results to decide what to test next.”

Three things separate a program from a one-time project:

- It doesn’t end. A project has a finish line. A program is a habit.

- It has governance. That’s a fancy way of saying someone decides what gets tested, when, and how results get shared.

- It builds institutional knowledge. Meaning: you write down what you tested, what happened, and what you learned. So you don’t repeat the same test six months later because nobody remembers.

Think of it like a CRO strategy turning into a living system. The strategy is the plan. The program is the machine that runs the plan. If you haven’t yet, create a conversion optimization strategy before building the program around it. If you’ve been doing digital CRO but haven’t formalized anything, you’re probably running tests without a program. That works for a while. It stops working when you want consistent results.

Why most CRO programs fail (it’s not the tools)

91% of testing programs feel underfunded. 47% lack clear goals. 95% don’t have any kind of education program for the team running them. That’s from VWO and Speero’s 2024 research across hundreds of companies.

The tools aren’t the bottleneck. Stefan Thomke, a Harvard Business School professor who literally wrote the book on this, put it plainly: “The obstacles blocking experimentation are not tools and technology but shared behaviors, beliefs, and values.”

The real killers:

No executive buy-in. The person making decisions doesn’t trust test results. They go with their gut instead. The industry calls this the HiPPO problem (Highest-Paid Person’s Opinion). The CEO says “I don’t like the blue button.” The team changes it, even though the blue version converted 15% better in the test. That’s not a program. That’s a suggestion box.

Testing becomes a side project. The thing someone does on Friday afternoons when “real work” is done. Programs starve this way. Slowly, then all at once.

Nobody knows what to do when tests lose. Only about 1 in 7 or 8 A/B tests produces a clear winner. That’s normal. If your team treats a losing test as a waste of time instead of useful information, the program won’t survive its first quarter.
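To set expectations, here’s a rough back-of-the-envelope sketch in Python. It assumes every test has the same, independent chance of producing a clear winner, which is a simplification. `yearly_outlook` is just an illustrative helper name.

```python
def yearly_outlook(tests_per_year, win_rate):
    """Return (expected clear winners, chance of at least one clear winner).

    Assumption: each test wins independently with the same probability.
    """
    expected_wins = tests_per_year * win_rate
    chance_of_at_least_one = 1 - (1 - win_rate) ** tests_per_year
    return expected_wins, chance_of_at_least_one


if __name__ == "__main__":
    # One test per month, using the "1 in 7 or 8" range from the text.
    for win_rate in (1 / 7, 1 / 8):
        wins, chance = yearly_outlook(12, win_rate)
        print(f"win rate {win_rate:.3f}: ~{wins:.1f} winners, "
              f"{chance:.0%} chance of at least one")
```

At one test per month, a 1-in-8 win rate works out to about 1.5 expected winners a year and roughly an 80% chance of at least one. Most individual tests lose, and the year can still pay off.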

Most “winning” tests are probably wrong

Researchers at Wharton studied how people actually run A/B tests. What they found is uncomfortable. 57% of testers call their tests too early. They see a promising result and stop the test before enough visitors have come through. This is a form of p-hacking, often called peeking.

The consequence: the percentage of “wins” that are actually just random noise jumps from 33% to 42%. No rules about when a test is “done”? No required number of visitors? Then a big chunk of your “wins” are coin flips that happened to land heads.

This is why governance matters. Without it, you’re making decisions on bad data. And wondering why the “winning” changes don’t hold up in production.
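You can see the effect of stopping early with a small simulation. The sketch below runs A/A tests, where both versions are identical and any “winner” is pure noise. It compares calling the test at the first significant peek against waiting for the planned end. The conversion rate, batch size, and number of peeks are made-up values, and the significance check is a plain two-proportion z-test, not necessarily what your testing tool uses.

```python
import random
from math import sqrt
from statistics import NormalDist


def is_significant(conv_a, conv_b, n, alpha=0.05):
    """Two-sided two-proportion z-test with n visitors in each version."""
    pooled = (conv_a + conv_b) / (2 * n)
    if pooled in (0, 1):
        return False  # no variation yet, nothing to compare
    se = sqrt(2 * pooled * (1 - pooled) / n)
    z = abs(conv_a - conv_b) / n / se
    return 2 * (1 - NormalDist().cdf(z)) < alpha


def false_positive_rates(simulations=1000, peeks=10, batch=200, rate=0.10, seed=1):
    """Simulate A/A tests and return (rate with peeking, rate when waiting)."""
    rng = random.Random(seed)
    stopped_early = 0  # a "winner" was declared at some peek
    waited = 0         # a "winner" was declared only at the planned end
    for _ in range(simulations):
        conv_a = conv_b = 0
        any_peek_significant = False
        for peek in range(1, peeks + 1):
            # Both versions convert at the same true rate.
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            significant = is_significant(conv_a, conv_b, peek * batch)
            any_peek_significant = any_peek_significant or significant
        stopped_early += any_peek_significant
        waited += significant  # result at the final, planned sample size
    return stopped_early / simulations, waited / simulations


if __name__ == "__main__":
    peeking, waiting = false_positive_rates()
    print(f"false wins when peeking: {peeking:.1%}, when waiting: {waiting:.1%}")
```

Waiting for the planned sample keeps false wins near the 5% the test was designed for. Checking ten times along the way and stopping at the first “significant” result pushes that rate noticeably higher, even though nothing about the page changed.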

Our take: The fix isn’t fancier stats. It’s simpler rules. Pick one thing you’re measuring. Decide how many visitors you need before the test. Don’t peek. Kirro handles this automatically, but even a sticky note on your monitor works.
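If you want a number for that sticky note, here is a minimal sketch of the standard sample-size approximation for comparing two conversion rates. The 5% significance level, 80% power, and the example rates are assumptions chosen for illustration, and your testing tool may calculate this differently.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed in EACH version for a two-sided test.

    baseline_rate: current conversion rate, e.g. 0.03 for 3%
    relative_lift: smallest improvement worth detecting, e.g. 0.20 for +20%
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


if __name__ == "__main__":
    # Hypothetical page: 3% baseline conversion, hoping to detect a 20% lift.
    print(visitors_per_variant(0.03, 0.20))
```

The useful intuition: the smaller the lift you want to detect, the more visitors you need. Halving the lift roughly quadruples the required traffic, which is why bold changes are easier to test on low-traffic pages.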

The “test more” myth

There’s a popular idea that more tests equals more growth. Sounds logical. It’s wrong.

Optimizely analyzed 127,000 tests across their platform. Impact per test peaks at 1 to 10 tests per year per person. Beyond 30? Expected impact drops by 87%.

Why? Teams measured on win rate start gaming the metric. They run easier, safer tests. More tests, less learning.

The right question isn’t “how many tests can we run?” It’s “what did we learn?”

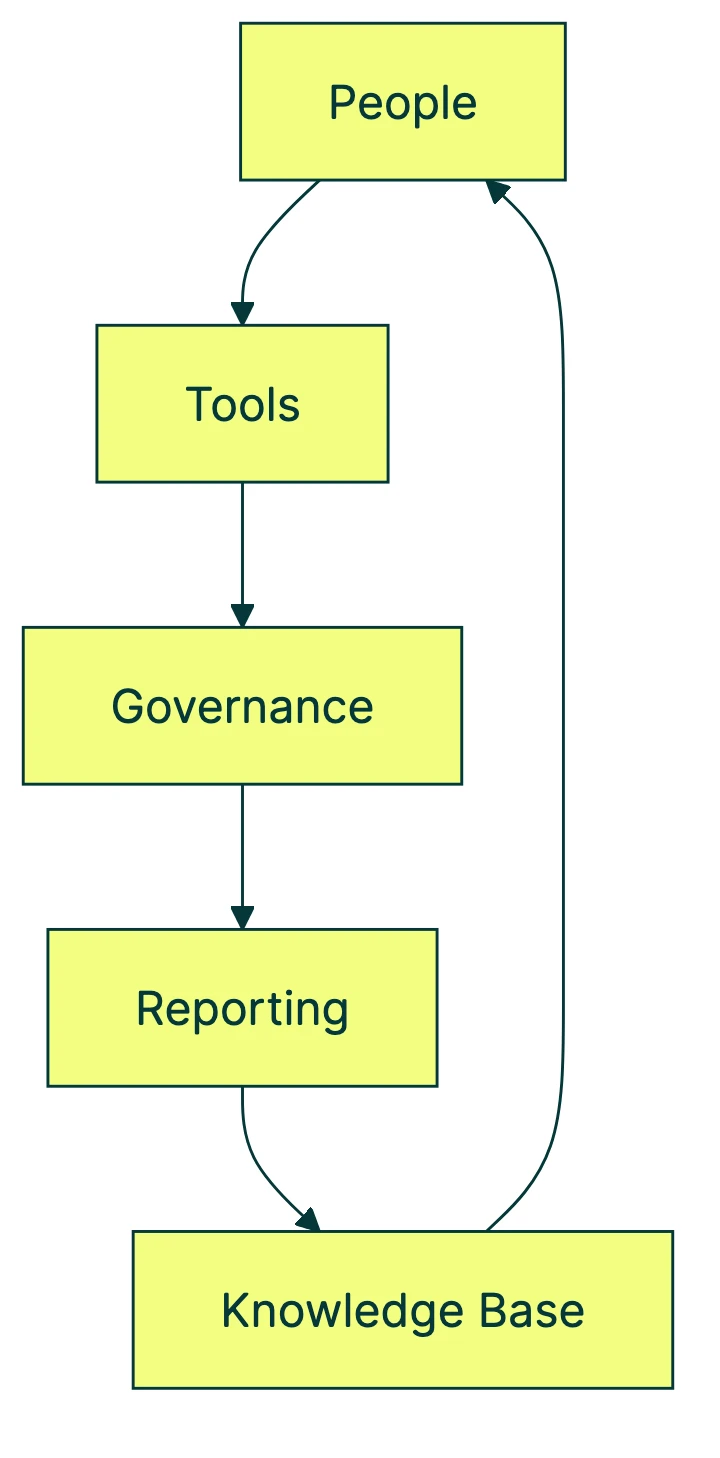

The 5 components of a working CRO program

People

Someone has to own it. For small teams, that’s usually one person wearing multiple hats. Larger teams have three models: centralized (one CRO team serves the whole company), decentralized (each team runs their own tests), or hybrid. Most land on hybrid, where a small core team sets standards and everyone else runs their own tests.

59% of companies at the earliest maturity level don’t have a single dedicated person for testing. That’s not a dealbreaker. You can run a CRO program as a solo marketer. You just need to block time for it like you would for any other recurring task.

Tools

The minimum viable stack: analytics (GA4), behavioral research (heatmaps, session recordings), and an A/B testing platform. That’s it.

90% of leading organizations use a single testing platform across their whole company. And tests that pull data from two or more CRO tools are 18% more likely to produce useful results.

You don’t need enterprise software to start. You need GA4 (free), a heatmap tool, and something that lets you run CRO tests without filing a developer ticket. Tools like Kirro let you set up and run tests in minutes with a visual editor, no code required. Pair that with GA4 and you’ve got a working stack.

If you’re comparing options, we’ve got a full breakdown of A/B testing tools and CRO software.

Governance

This is where programs either mature or stall. Governance means: who decides what gets tested? Who reviews results? How do winning versions get pushed live?

Without this, testing becomes what one author called “random acts of optimization.” Someone tests a headline. Someone else tests a button color. Nobody coordinates. Nobody learns from each other’s work. Follow CRO best practices to keep things structured without making it bureaucratic.

Reporting cadence

Regular rhythm of sharing results across your team. Monthly works for most small teams. Bi-weekly if you’re running tests faster.

62% of programs lack testing-related CRO metrics at the team level. Most teams are running tests but not talking about them.

Sharing results (including the losing ones) is how you build buy-in. Show your boss a monthly report: “we tested three things, one worked, two didn’t, here’s what we learned.” Testing stops feeling like a gamble. Starts feeling like a system.

Knowledge base

A place where you record what you tested, what happened, and what you learned. The most underrated component by far. 58% of programs don’t have one. Zero percent of beginner-stage companies maintain one.

Start simple. A spreadsheet works. Columns: what you tested, what you expected, what happened, what you’ll do differently. That’s your knowledge base version one.

The surprising part: the thing that predicts program maturity most isn’t team size or budget. It’s whether you keep a knowledge base. A solo marketer with a spreadsheet is further along than a 10-person team that never writes anything down.

Our take: If you only do one thing from this whole article, start a test log. Even a Google Sheet with four columns beats a team of five that never documents anything.
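If a plain file suits you better than a Google Sheet, the same four-column log can be a CSV. This is only a sketch: the column names, the `log_test` and `read_log` helpers, and the example file name are all made up for illustration.

```python
import csv
from pathlib import Path

# The four columns suggested above (names are illustrative).
COLUMNS = [
    "what_we_tested",
    "what_we_expected",
    "what_happened",
    "what_we_will_do_differently",
]


def log_test(path, tested, expected, happened, next_time):
    """Append one test to the log, writing the header row if the file is new."""
    path = Path(path)
    is_new = not path.exists() or path.stat().st_size == 0
    with path.open("a", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        if is_new:
            writer.writerow(COLUMNS)
        writer.writerow([tested, expected, happened, next_time])


def read_log(path):
    """Return every logged test as a list of dicts, oldest first."""
    with Path(path).open(newline="", encoding="utf-8") as f:
        return list(csv.DictReader(f))


if __name__ == "__main__":
    # Hypothetical entry, not a real result.
    log_test(
        "test_log.csv",
        "Homepage headline: benefit-led vs. feature-led",
        "Benefit-led headline gets more signups",
        "No clear difference after the planned sample",
        "Test a bigger change, such as the whole hero section",
    )
    print(len(read_log("test_log.csv")), "tests logged")
```

Losing and inconclusive tests go in the log too. They’re the entries that stop you from rerunning the same idea six months later.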

Building a culture of experimentation

“Culture of experimentation” sounds like it belongs in a corporate mission statement. For a small team, it’s way less dramatic. Your CEO reads a blog post and wants to swap the homepage headline? Test the new version against the current one. Let the numbers decide.

That’s the whole culture shift.

Booking.com runs 25,000+ tests per year. 75% of their staff actively uses the testing platform. Only 10% of those tests generate positive results. They’re not batting .100 because they’re bad at this. They’re batting .100 because they test everything, including the long shots. Each failure eliminates a bad option. That’s the system working.

You don’t need to be Booking.com. But the principle scales down perfectly.

Thomke describes five stages of experimentation culture: awareness, belief, commitment, diffusion, and embeddedness. For a solo marketer, you can translate those as:

- “I’ve heard of A/B testing” (awareness)

- “I think testing would help my business” (belief)

- “I’m actually running tests” (commitment)

- “Other people on my team are running tests too” (diffusion)

- “We don’t change anything without testing it first” (embeddedness)

Most small businesses are stuck between awareness and belief. Getting to commitment is the hard part. Here’s how:

- Make testing easy enough to be the default. If setting up a test takes two hours and a developer, you’ll never do it regularly. If it takes three minutes, you’ll do it before lunch. Set up your first test with Kirro and see what I mean.

- Share results openly. Wins and losses. When you hide the losing tests, you’re telling your team that testing is only valuable when it “works.” That kills the habit.

- Celebrate learning, not just lifts. “We learned that longer headlines don’t help on mobile” is more valuable than a 2% conversion bump that disappears next month.

Read more about CRO training and CRO blogs if you want to go deeper.

CRO program maturity: where you are and where to go

Other CRO program articles skip this, but your program has a maturity level. Knowing where you sit tells you what to work on next, instead of trying to do everything at once.

Five levels, adapted from Speero’s research:

Level 1: Ad-hoc. You run a test when someone has an idea. No regular cadence. No documentation. Most small businesses start here.

Level 2: Reactive. You test in response to problems. Bounce rate too high? Test a new layout. Conversion dipping? Test the CTA. Better than level 1, but you’re always playing defense.

Level 3: Structured. You have a testing cadence (say, one test per month). A way to prioritize ideas. A spreadsheet of past results. This is where real compounding starts.

Level 4: Strategic. Testing informs business decisions, not just page layouts. Your conversion optimization strategy ties directly to company goals. Multiple people or teams are involved.

Level 5: Embedded. Testing is the default. Nothing goes live without evidence. Only about 1 in 10 companies reaches this level.

The numbers back this up. 54% of companies now sit at strategic or transformative levels, up from 35% in 2021. Companies with mature testing programs are 69% more likely to report significant growth. And organizations with high testing investment are 350% more likely to achieve significant growth.

Where should you focus based on your level?

| Your level | Focus on | Skip for now |
|---|---|---|
| Level 1 (ad-hoc) | Running your first real test. Just one. | Governance, cross-team alignment |
| Level 2 (reactive) | Setting a monthly testing cadence | Complex prioritization frameworks |
| Level 3 (structured) | Building a knowledge base, sharing results | Enterprise tooling, multi-team coordination |
| Level 4 (strategic) | Connecting tests to business metrics | You’re doing great. Keep going. |

Remember: the strongest predictor of maturity isn’t team size or budget. It’s whether you maintain a knowledge base. That’s actionable even if you’re a team of one.

How to start a CRO program (even if it’s just you)

You don’t need a team, a budget, or permission. You need a page with traffic and a willingness to try something.

Step 1: Pick one page. Choose a page that gets traffic and has a measurable goal (signups, purchases, clicks on something). Run a CRO audit if you’re not sure where to start.

Step 2: Set up your tools. GA4 (free) plus an A/B testing tool. Pair GA4 with something like Kirro: paste one script, use the visual editor to change what you want, and let it run. Try it free. Three minutes.

Step 3: Run your first test. Start with the headline or the main button. These are usually the biggest levers. Check our CRO recommendations for ideas on what to test first.

Step 4: Document what happened. Even in a spreadsheet. What did you change? What did you expect? What actually happened? This is your knowledge base v1. It doesn’t need to be fancy. It needs to exist.

Step 5: Set a cadence. One test per month is enough to start. Block it on your calendar. Treat it like a recurring meeting with your website.

Step 6: Share results with whoever cares. Even if that’s just you writing it down in a Slack channel of one. The act of sharing forces you to think about what you learned. And if you have a boss, a monthly “here’s what we tested” update builds credibility fast.

But I don’t have enough traffic

You might not need as much as you think. Traditional A/B testing math (called frequentist statistics) typically needs large visitor numbers before it gives a confident result. Bayesian A/B testing instead reports the probability that one version beats the other, which can give you a useful answer with smaller visitor counts, though less data always means more uncertainty. If you get 1,000+ visitors per month to a single page, you can start testing. The key is choosing pages with enough traffic to reach a conclusion within a reasonable timeframe.
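To make the Bayesian idea concrete, here is a minimal sketch that estimates the probability that version B converts better than version A. It assumes a uniform Beta(1, 1) prior for both versions and uses simple random sampling. The function name and the visitor counts are hypothetical, and real testing tools may use different priors or decision rules.

```python
import random


def probability_b_beats_a(visitors_a, conversions_a, visitors_b, conversions_b,
                          draws=20000, seed=0):
    """Monte Carlo estimate of P(rate_B > rate_A) under Beta(1, 1) priors."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Sample a plausible conversion rate for each version from its posterior.
        rate_a = rng.betavariate(1 + conversions_a, 1 + visitors_a - conversions_a)
        rate_b = rng.betavariate(1 + conversions_b, 1 + visitors_b - conversions_b)
        wins += rate_b > rate_a
    return wins / draws


if __name__ == "__main__":
    # Hypothetical month: 500 visitors per version, 20 vs. 30 conversions.
    print(f"{probability_b_beats_a(500, 20, 500, 30):.0%} chance B is better")
```

With those made-up numbers the estimate should land somewhere around 90%. That is a statement you can act on with small traffic, but it is not a guarantee, so decide in advance what probability counts as “good enough” for you.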

A CRO program isn’t built by hiring a team and buying enterprise tools. It’s built by testing one thing, learning from it, and doing it again. The program grows from the habit. Not the other way around.

FAQ

What is a CRO program?

A CRO program is the organizational system that makes conversion rate optimization continuous instead of one-off. It includes five components working together: people (who runs it), tools (what you test with), governance (how decisions get made), reporting (how results get shared), and a knowledge base (what you learned from past tests). Without the program, you’re just running occasional tests with no compounding benefit.

How do you build a CRO program from scratch?

Start small. Pick one page with traffic. Set up GA4 and an A/B testing tool. Run one test. Write down what happened. Set a monthly cadence. The program grows from the habit, not from a planning document. Most successful programs started with a single person running a single test and documenting the result.

What does a CRO program consist of?

Five components: people (who owns testing), tools (your analytics and testing stack), governance (who approves tests, who reviews results, how winners get implemented), reporting cadence (regular sharing of results), and a knowledge base (a record of every test and what you learned). Research shows the knowledge base is the strongest predictor of program maturity.

How much traffic do I need to start a CRO program?

There’s no universal minimum. Bayesian A/B testing methods can give useful answers with less traffic than traditional approaches. If you get 1,000+ visitors per month to a single page, you can start testing. The key is choosing high-traffic pages so your test reaches a conclusion in a reasonable timeframe. Don’t let the “not enough traffic” excuse stop you from starting.

How do you create a culture of experimentation?

Make testing the default before changing anything on your website. Share results (wins and losses) openly. Celebrate what you learned, not just conversion lifts. For small teams, this is a habit more than a governance structure. The shift happens when “let’s test it” becomes the automatic response to “I think we should change the homepage.”

CRO process vs CRO program: what’s the difference?

The CRO process is the step-by-step workflow for running an individual test, from research to hypothesis to analysis. A CRO program is the organizational shell that makes that process sustainable: the team, tools, governance, and culture that keep it running month after month. Understand the process first, then build the program around it. Process is how you run one test. Program is how you keep testing for years.

Randy Wattilete

CRO expert and founder with nearly a decade running conversion experiments for companies from early-stage startups to global brands. Built programs for Nestlé, felyx, and Storytel. Founder of Kirro (A/B testing).
