Your free trial conversion rate is the percentage of trial signups who become paying customers. For most SaaS products, that number lands between 8% and 25% without a credit card requirement, and 30% to 60% with one. That range is massive. And it’s massive because most benchmark articles lump every trial type together like they’re the same thing.

They’re not. And that confusion is costing you clarity.

This guide breaks down free trial conversion rate by what actually matters: trial model, trial length, product type, and company stage. The formula is simple. The benchmarks aren’t. And the tactics that move the number are surprisingly specific. It’s all part of conversion rate optimization for SaaS.

What is free trial conversion rate

The formula is straightforward. If you want the full walkthrough on how to calculate conversion rate, we’ve got a separate guide. But here’s the short version:

Free trial conversion rate = (Paying customers ÷ Total trial signups) × 100

Say 200 people sign up for your free trial this month. 30 of them become paying customers. Your free trial conversion rate is 15%.
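
As a minimal sketch, the formula maps onto a small Python helper. The function name `trial_conversion_rate` is hypothetical, picked for illustration; the numbers are the ones from the example above.

```python
def trial_conversion_rate(paying_customers: int, trial_signups: int) -> float:
    """Return the free trial conversion rate as a percentage."""
    if trial_signups <= 0:
        raise ValueError("trial_signups must be positive")
    if not 0 <= paying_customers <= trial_signups:
        raise ValueError("paying_customers must be between 0 and trial_signups")
    # (Paying customers / Total trial signups) x 100
    return 100 * paying_customers / trial_signups


rate = trial_conversion_rate(paying_customers=30, trial_signups=200)
print(f"{rate:.1f}%")  # 15.0%
```

Count both numbers over the same cohort (people who signed up in a given month, and how many of those same people ended up paying), otherwise the percentage drifts.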

This metric sits right in the middle of your conversion funnel. It’s different from your website-to-signup rate (that’s top of funnel) and your retention rate (that’s what happens after they pay). It measures one specific handoff: did the person who tried your product decide it was worth paying for?

It belongs in any serious conversion rate metrics dashboard alongside metrics like your lead-to-customer conversion rate, because it directly controls your revenue math. Spend $50 to acquire a trial signup and convert at 10%, each paying customer costs you $500. Bump that to 20%, same customer costs $250. Same traffic. Half the cost.

That’s why a 5-percentage-point improvement (say, from 10% to 15%) can mean 50% more revenue from the same trial volume.
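
The revenue math can be checked with a few lines of Python. This is a rough sketch: the function names are hypothetical, and the 50% figure assumes a 10% baseline, 1,000 trials, and the same revenue per paying customer before and after.

```python
def cost_per_paying_customer(cost_per_signup: float, conversion_pct: float) -> float:
    """Acquisition cost per paying customer, given the cost per trial signup."""
    if conversion_pct <= 0:
        raise ValueError("conversion_pct must be positive")
    return cost_per_signup * 100 / conversion_pct


def paying_customers(trial_signups: int, conversion_pct: float) -> float:
    """Expected paying customers from a fixed number of trial signups."""
    return trial_signups * conversion_pct / 100


print(cost_per_paying_customer(50, 10))  # 500.0
print(cost_per_paying_customer(50, 20))  # 250.0

# Assumed baseline: 1,000 trials converting at 10%, then at 15%.
before = paying_customers(1000, 10)
after = paying_customers(1000, 15)
print(f"{(after - before) / before:.0%} more paying customers")  # 50% more paying customers
```

The same 5 points on top of a 25% baseline would only be a 20% lift, so the lower your starting rate, the more each point is worth.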

Our take: Most people obsess over getting more traffic. We’d rather help you convert the traffic you already have. A better trial experience beats a bigger ad budget almost every time.

Free trial to paid conversion rate benchmarks

Every article you’ve read probably gave you a single “average” free trial to paid conversion rate. Something like 15% or 25%. Those numbers aren’t wrong, exactly. They’re just useless without context.

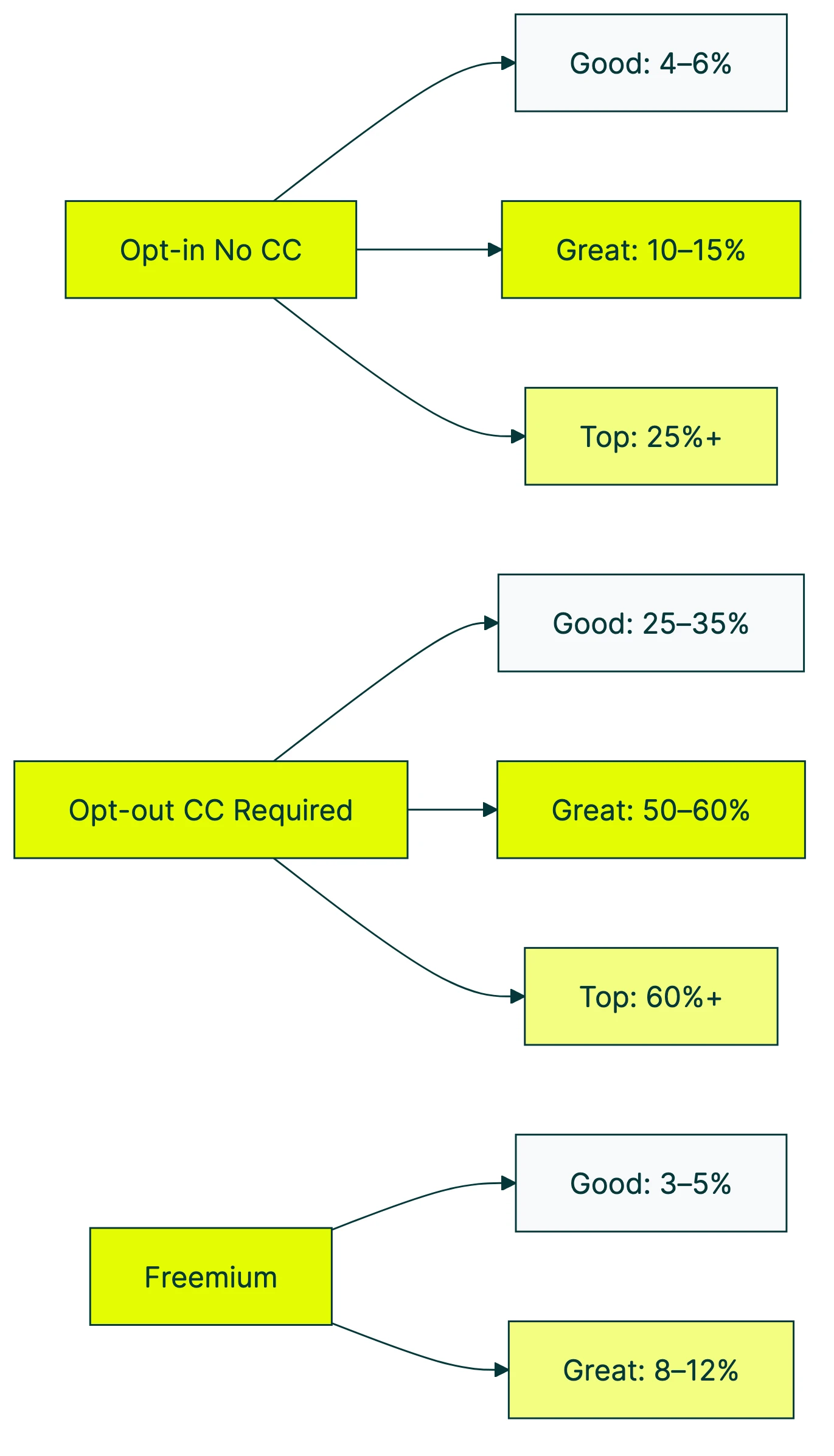

The biggest variable is your trial model. ChartMogul’s 2026 SaaS Conversion Report looked at 200 B2B software products:

| Trial model | Good | Great | Top performers |
|---|---|---|---|
| Opt-in (no credit card) | 4–6% | 10–15% | 25%+ |
| Opt-out (credit card required) | 25–35% | 50–60% | 60%+ |
| Freemium | 3–5% | 8–12% | 15%+ |

That’s a 5x gap between opt-in and opt-out trials. Not a slight difference. Five times.

Why? Because asking for a credit card filters out casual browsers. The people who enter payment details are already leaning toward paying. That’s selection bias doing the heavy lifting, not product magic.

Here’s how it breaks down by industry, according to Baremetrics:

| Industry | Median trial conversion |
|---|---|
| CRM software | ~29% |
| Developer tools | ~24% |
| Marketing tools | ~18% |
| B2B SaaS (overall) | 18.5–25% |
| B2C SaaS | 10–20% |
| Healthcare SaaS | ~11% |
| Enterprise ($100K+ deals) | ~5% |

If you’re doing B2B conversion rate optimization, your numbers will look different from a consumer app. Enterprise deals with six-figure price tags naturally convert fewer trials. Not because the product is worse. The buying decision just involves more people, more budget approval, and more “let me circle back on that.”

For mobile app conversion rates, the numbers look different. RevenueCat’s data shows 38% median trial-to-paid across apps with trials. Top quartile hits 60%+. Mobile tends to run higher because trials are shorter and payment flows have less friction.

Why your benchmarks don’t match anyone else’s

Comparing your free trial conversion rate to a generic benchmark is like comparing your gas mileage to “the average car.” A Prius and a pickup truck are both cars. The average of their fuel economy helps nobody.

A few variables do most of the work:

Trial model is the biggest factor. We covered this above. Opt-in vs opt-out creates a 5x gap on its own.

Company stage matters too. ChartMogul found a 10x difference between the bottom 20% and top 20% of self-serve SaaS products. Early-stage companies with weak onboarding sit at one end. Mature products with polished first-run experiences sit at the other.

Traffic source changes everything. Organic visitors who searched for your product convert differently than people who clicked a Facebook ad. Your click conversion rate from ads is a completely different beast than your organic signup rate.

Product complexity plays a role. A simple tool with a clear value proposition (change this, see results) converts trials faster than a platform that requires data integration, team setup, and a 3-week implementation.

And then there’s the number you’re measuring. Some articles report visitor-to-paid (the full funnel from click to purchase). Others report trial-to-paid (mid-funnel). Others report total-users-to-paid, which includes freemium signups who never intended to pay.

These are all “conversion rates.” They’re also completely different numbers.

When someone tells you “the average free trial conversion rate is X%,” your first question should be: average of what?
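
To see how far apart these numbers can be, here is a small sketch that computes all three from one set of counts. The `FunnelCounts` fields and the sample figures are made up for illustration; they are not benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FunnelCounts:
    visitors: int            # unique website visitors in the period
    trial_signups: int       # accounts that started a free trial
    freemium_signups: int    # accounts on a forever-free plan
    paid_from_trial: int     # trial accounts that became paying
    paid_from_freemium: int  # freemium accounts that became paying


def pct(numerator: int, denominator: int) -> float:
    """Percentage, returning 0.0 when the denominator is zero."""
    return 100 * numerator / denominator if denominator else 0.0


def conversion_rates(c: FunnelCounts) -> dict:
    """Three different 'conversion rates' from the same business."""
    total_paid = c.paid_from_trial + c.paid_from_freemium
    total_users = c.trial_signups + c.freemium_signups
    return {
        "visitor_to_paid": pct(total_paid, c.visitors),
        "trial_to_paid": pct(c.paid_from_trial, c.trial_signups),
        "total_users_to_paid": pct(total_paid, total_users),
    }


# Made-up numbers, for illustration only.
counts = FunnelCounts(10_000, 400, 600, 60, 18)
for name, value in conversion_rates(counts).items():
    print(f"{name}: {value:.2f}%")
```

With these sample counts the same product reports 0.78%, 15.00%, or 7.80% depending on which definition you pick.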

The Day 0 problem: when trial conversion is really decided

This is the finding that most benchmark articles skip entirely. And it changes how you should think about trial conversion.

RevenueCat’s data shows that 55% of all trial cancellations happen on Day 0. The same day the person signed up. Not Day 7. Not at the trial deadline. Day 0.

And it gets more interesting. 80% of all trial starts happen on Day 0 too, meaning the same day the app is installed. People sign up for your trial and decide its fate in the same session.

Samuel Hulick, founder of UserOnboard, puts it more bluntly: 40–60% of people who start a free trial never log in a second time. They sign up, poke around, and vanish.

So your carefully crafted 7-day drip sequence? It’s landing in the inbox of someone who already decided on Day 0 that your product wasn’t for them. That email on Day 3? They’ve already moved on.

The flip side is encouraging. Baremetrics reports that trial users who complete key setup steps within 3 days are 3–4x more likely to convert. The industry calls these “activation metrics” (the specific actions that predict whether someone will pay).

The CRO metrics that matter aren’t how many days someone has left. They’re whether someone actually used the product.

Your job isn’t to give people time. It’s to help them succeed fast.
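
One way to check whether activation predicts payment in your own data is to split trial users by whether they completed your key setup steps within 3 days, then compare conversion rates. A minimal sketch, assuming you can export two flags per user; the `TrialUser` fields and the sample cohort are hypothetical.

```python
from typing import Dict, Iterable, NamedTuple


class TrialUser(NamedTuple):
    activated: bool  # completed the key setup steps within 3 days
    converted: bool  # became a paying customer


def conversion_by_activation(users: Iterable[TrialUser]) -> Dict[str, float]:
    """Trial-to-paid rate (%) for activated vs. non-activated users."""
    totals = {True: 0, False: 0}
    paid = {True: 0, False: 0}
    for user in users:
        totals[user.activated] += 1
        paid[user.activated] += int(user.converted)

    def rate(flag: bool) -> float:
        return 100 * paid[flag] / totals[flag] if totals[flag] else 0.0

    return {"activated": rate(True), "not_activated": rate(False)}


# Made-up cohort: 10 activated users (4 paid), 20 non-activated users (2 paid).
users = (
    [TrialUser(True, True)] * 4 + [TrialUser(True, False)] * 6
    + [TrialUser(False, True)] * 2 + [TrialUser(False, False)] * 18
)
rates = conversion_by_activation(users)
print(rates)  # {'activated': 40.0, 'not_activated': 10.0}
```

A large gap between the two rates suggests you picked a meaningful activation step. It shows correlation, not proof that pushing more people through the step will convert them.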

Our take: We built Kirro with a 30-day trial and no credit card required. But we designed the product so you can run your first test in 3 minutes. That first-session experience is everything. If someone can’t see value in one sitting, a longer trial won’t save it.

How trial length affects conversion

Conventional wisdom says shorter trials create urgency and convert better. Makes intuitive sense. Tighter deadline, more motivation, right?

The data is more complicated than that.

Recurly’s 2024 report (2,200+ brands, 58 million subscribers) found that 7-day-or-shorter trials hit a 78.6% conversion rate. Highest of any duration. But their dataset is 90% credit-card-required trials in consumer categories. So the high conversion likely reflects the opt-out model, not the short duration.

Then there’s the only real scientific study on this: a proper randomized controlled trial published in Frontiers in Psychology. 680,000 users across 190 countries. It compared 3-day trials to 7-day trials. The result?

Longer trials increased trial adoption by 11%. More people signed up. But they had no meaningful effect on immediate conversion. The 7-day group didn’t convert better than the 3-day group.

What they did find: longer trials boosted delayed reactivation (people coming back and subscribing later) by 42%. So longer trials plant seeds. They just don’t create urgency.

Lincoln Murphy, a SaaS customer success consultant, has been saying this for years: “Very rarely does the length of the trial have a major impact on conversions.” When companies shorten trials and see better results, he argues, the improvement comes from the team working harder on onboarding. Not from the shorter deadline.

ChartMogul’s data shows the market has voted: 62% of products use a 14-day trial, 14% use 7-day, and 14% use 30-day. But “most popular” and “best performing” aren’t the same thing.

If you’re not sure what length works for your product, don’t guess. Set up a free A/B test and run two trial lengths side by side. Let your own data decide.
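
Once the test has run, you need a way to tell a real difference from noise. A common choice is a two-proportion z-test; the sketch below uses only the standard library. The function name and the sample counts are assumptions for illustration, and a dedicated testing tool may use a different statistical method.

```python
import math
from typing import Tuple


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rate, B minus A."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both groups need at least one trial signup")
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both lengths convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no detectable difference
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up results: 7-day trial (A) vs. 14-day trial (B), 1,000 signups each.
z, p = two_proportion_z_test(conv_a=120, n_a=1000, conv_b=150, n_b=1000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

Given the research above, measure conversions over a window longer than the trial itself, since longer trials may pay off through delayed subscriptions rather than immediate ones.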

How to improve your free trial conversion rate

These are the moves that actually work, based on the research above.

Nail the first session. The Day 0 data makes this your biggest opportunity. Can a new signup see value in the first 5 minutes? If your product requires a 30-minute setup before anything useful happens, that’s where you’re losing people.

Not at the paywall. At the setup screen.

Reduce the steps between “I signed up” and “oh, this is useful.” Every extra screen, form field, or configuration step is a chance for someone to close the tab.

Your trial model matters more than you think. Established brand with strong word-of-mouth? Requiring a credit card (opt-out) will give you higher conversion rates. Unknown and building awareness? Skip the credit card.

ChartMogul found that 80% of SaaS products use opt-in (no credit card) trials. More signups means more chances to prove your product works.

Trigger upgrade prompts based on actions, not time. Don’t wait to send a “your trial is ending” email on Day 12. Send the upgrade prompt when someone completes a key action.

Kyle Poyar’s research (OpenView Partners) found that only 20–30% of new signups reach activation, even at the best product-led companies. The ones who do activate? Your best conversion candidates. Talk to them.
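
An action-based trigger can be as simple as a check that runs whenever a user completes something. A minimal sketch: the event names in `KEY_ACTIONS` and the function `should_prompt_upgrade` are hypothetical, so swap in whichever actions predict payment for your product.

```python
from typing import FrozenSet, Iterable

# Hypothetical activation events; replace with your own.
KEY_ACTIONS: FrozenSet[str] = frozenset({"created_first_project", "invited_teammate"})


def should_prompt_upgrade(
    completed_actions: Iterable[str],
    already_prompted: bool,
    key_actions: FrozenSet[str] = KEY_ACTIONS,
) -> bool:
    """True once every key action is done and no prompt has been shown yet."""
    if already_prompted:
        return False
    return key_actions.issubset(completed_actions)


print(should_prompt_upgrade(["signed_up", "created_first_project"], False))         # False
print(should_prompt_upgrade(["created_first_project", "invited_teammate"], False))  # True
print(should_prompt_upgrade(["created_first_project", "invited_teammate"], True))   # False
```

The `already_prompted` flag keeps the prompt from firing on every later action, so an activated user sees it once, at the moment they are most likely to care.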

Most A/B testing and conversion rate advice focuses on signup pages. But the real conversion happens inside the product. Test your onboarding flow, your upgrade page, your pricing presentation. You can test these changes in a few minutes without waiting for a developer.

One more thing. Trials are getting less effective. Recurly’s 2025 report (67 million subscribers) shows free trial conversion fell from 46% to 33% year-over-year. Subscription fatigue is real. People sign up for more trials and commit to fewer of them.

The same study found that feature-based promotions (showing off a new capability during the trial) outperformed price discounts. People don’t need a cheaper offer. They need a reason to believe the product is worth it.

This is where conversion rate optimization becomes less optional and more essential. The days when a decent trial experience “just worked” are fading. Active testing and iteration are what separate the products that convert from the ones that don’t.

Free trial vs freemium: which converts better

This isn’t a “which is better” question. It’s a “which fits your business” question.

| Factor | Free trial | Freemium | Hard paywall |
|---|---|---|---|
| Signup rate (website to account) | ~5% | ~9% | Lowest |
| Conversion rate (to paid) | 8–25% | 2–5% | 12% (apps) |
| Support load | Moderate (time-limited) | High (forever-free users) | Low |
| Best for | Complex products, higher price | Simple products, low price | Strong brands, mobile apps |

Data sources: Lenny Rachitsky/OpenView/Pendo survey of 1,000+ products; RevenueCat for mobile app data.

Free trials get a lower share of website visitors to sign up (5% vs 9% for freemium). But they convert those signups at much higher rates. It’s a quality vs quantity trade-off.
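
To put the trade-off in one number, multiply the signup rate by the conversion rate to get a rough visitor-to-paid range. This is back-of-the-envelope only: it uses the table's approximate figures and assumes both rates describe the same population. The function name is hypothetical.

```python
from typing import Tuple


def visitor_to_paid_range(
    signup_rate_pct: float, paid_low_pct: float, paid_high_pct: float
) -> Tuple[float, float]:
    """Rough share of website visitors (%) who end up paying."""
    low = signup_rate_pct * paid_low_pct / 100
    high = signup_rate_pct * paid_high_pct / 100
    return low, high


# Figures taken from the comparison table above.
models = {
    "free trial": (5, 8, 25),  # ~5% signup, 8-25% to paid
    "freemium": (9, 2, 5),     # ~9% signup, 2-5% to paid
}
for model, (signup, low_pct, high_pct) in models.items():
    low, high = visitor_to_paid_range(signup, low_pct, high_pct)
    print(f"{model}: {low:.2f}% to {high:.2f}% of visitors pay")
# free trial: 0.40% to 1.25% of visitors pay
# freemium: 0.18% to 0.45% of visitors pay
```

On these figures the free trial comes out ahead per visitor, but the ranges nearly touch, which is why fit with your product and price matters more than the averages.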

The surprise in the data: RevenueCat found that hard paywalls (pay before you use anything) convert at 5.5x the rate of freemium for mobile apps (12.11% vs 2.18%). Turns out, making something free doesn’t automatically lead to more paying customers.

Hybrid approaches exist too. Lenny’s survey found that 44% of free-trial companies have a salesperson reach out to more than half of their trial signups. That’s not pure product-led growth. That’s product-led with a human assist.

When to pick which:

- Simple product + low price: Freemium. Let people use it forever and convert the ones who need more.

- Complex product + higher price: Free trial. Give people enough time to see value, then ask for the upgrade.

- Strong brand + mobile app: Consider a hard paywall. If people trust you, they’ll pay to try.

- Not sure: Start with a free trial. You can always add a freemium tier later. Going the other direction (removing free access) is harder.

For a deeper look at how these models compare across the SaaS world, check our SaaS conversion rate benchmarks.

FAQ

What is a good free trial conversion rate?

It depends on your model. For opt-in trials (no credit card), ChartMogul’s 2026 data shows 8–15% is good and 15–25% is great. For opt-out trials (credit card required), 25–35% is good and 50–60% is great. Freemium products converting at 3–5% is normal.

If someone tells you a single number without specifying the trial model, ask which kind. The answer changes everything.

What is a good freemium conversion rate?

3–5% is solid. 8–12% is excellent. Slack’s famous 30% freemium conversion is an extreme outlier, not a benchmark.

Most freemium products sit well under 10%, and that’s fine. Freemium is a volume play. You’re trading conversion rate for a larger top of funnel.

For broader context on what counts as a good conversion rate, it always depends on what you’re measuring and where in the funnel you’re looking.

How long should a free trial be?

14 days is the most common (62% of products, per ChartMogul). But the academic evidence suggests trial length matters less than your first-session experience. A well-onboarded 7-day trial can outperform a neglected 30-day trial.

If you’re unsure, pick 14 days and focus your energy on making Day 0 great. Then test a different length and see if it moves the needle.

Is a 2.5% conversion rate good?

Context matters. For a no-credit-card free trial, 2.5% is below average (you’d want 8%+). For a freemium model measuring total-users-to-paid, 2.5% is within normal range. For your goal conversion rate, it depends entirely on which goal you’re measuring.

Before panicking about a low number, make sure you’re comparing it to the right benchmark. Use the conversion rate (CVR) tables above and match your model type.

Free trial vs freemium: which converts better?

Free trials convert at higher rates per signup (8–25% vs 2–5%). But freemium generates nearly twice the signups. Neither is universally “better.”

Pick based on your product complexity, price point, and support capacity. Product needs explanation? Free trial. Self-explanatory? Freemium can work. For app store conversion benchmarks, the dynamics shift again because app stores handle payments differently.

Randy Wattilete

CRO expert and founder with nearly a decade running conversion experiments for companies from early-stage startups to global brands. Built programs for Nestlé, felyx, and Storytel. Founder of Kirro (A/B testing).
