AI A/B testing uses machine learning to help you design, run, and analyze your tests faster. You stop manually picking what to test, writing every headline yourself, and waiting weeks for results. AI handles parts of that for you.

That’s the short version. The longer version is more interesting (and more honest).

Some AI features genuinely save you time and improve your testing methodology. Others are marketing fluff slapped onto enterprise tools you can’t afford. And a few can actually make your results worse if the math behind them isn’t solid. We’ve spent a lot of time digging into which is which.

What is AI A/B testing?

Traditional A/B testing is hands-on. You decide what to test. You write both versions. You split visitors 50/50. You wait until your sample size formula tells you enough people have seen each version. Then you read the numbers yourself.
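That “sample size formula” is less mysterious than it sounds. Here is a minimal sketch of the standard two-proportion calculation, assuming a two-sided test at 5% significance and 80% power. The function name and the defaults are our own for illustration, not taken from any particular tool.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Visitors needed per version to detect a given relative lift.

    baseline_rate: current conversion rate, e.g. 0.05 for 5%
    relative_lift: smallest lift worth detecting, e.g. 0.20 for +20%
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Example: 5% baseline conversion rate, looking for a 20% relative lift
print(sample_size_per_variant(0.05, 0.20))
```

With those example inputs you need a little over 8,000 visitors per version. Halve the lift you want to detect and the number roughly quadruples, which is why small sites struggle to test small changes.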

AI A/B testing keeps the same basic framework, but automates parts of it. Think of it like cruise control, not self-driving. You still pick the destination. AI just takes over some of the steering.

The “AI” label covers a lot of ground, though. Some tools use real machine learning to spot winners earlier by checking results as data rolls in (a method called sequential testing). Others just bolt a chatbot onto their settings page and call it AI. Big difference.

A/B testing is still about comparing two versions and letting real visitors pick the winner. Methods that update their math as results come in (Bayesian A/B testing) do the heavy lifting. AI just touches the workflow around it. We wrote a separate guide on AI conversion rate optimization if you want the broader picture.
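To make “updating the math as results come in” concrete, here is a small sketch of one common Bayesian approach: a Beta posterior for each version’s conversion rate, plus a Monte Carlo estimate of the chance that B beats A. It assumes a uniform Beta(1, 1) prior, and the function name is hypothetical. Real tools may use different priors and decision rules.

```python
import random


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20000, seed=0):
    """Estimate P(rate_B > rate_A) from Beta posteriors.

    conv_*: conversions seen so far, n_*: visitors seen so far.
    Assumes a uniform Beta(1, 1) prior on each conversion rate.
    """
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Draw one plausible conversion rate for each version
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


# Example: A converted 50 of 1,000 visitors, B converted 80 of 1,000
print(prob_b_beats_a(50, 1000, 80, 1000))
```

You can rerun this every time new data arrives. Whether it is safe to stop the test based on that number is a separate question, and it depends on the stopping rule.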

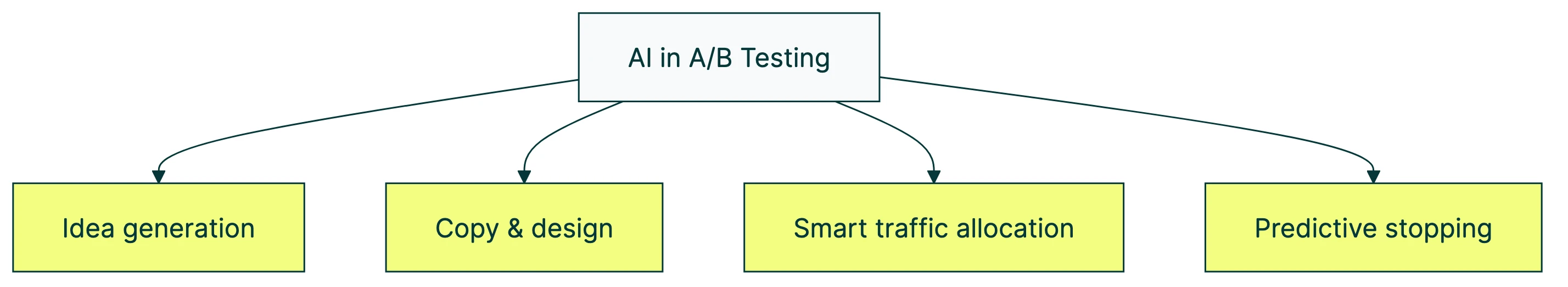

4 ways AI is changing how you run tests

This is where automated A/B testing gets real. Some of these features are available today. Others are mostly promises on pricing pages.

1. AI-generated test ideas

The hardest part of testing isn’t the setup. It’s figuring out what to test.

AI tools can scan your page and suggest changes worth testing. VWO Copilot looks at your webpage and generates a prioritized list of ideas. Kameleoon’s AI Copilot and AB Tasty’s Hypothesis Copilot do similar things.

Optimizely’s data backs this up. Their 2025 Opal Benchmark Report found roughly 11% of tests started from AI-generated ideas. Another 19.5% were follow-ups based on AI insights. That’s not nothing.

AI is good at spotting patterns in your data. It’s bad at understanding why your customers buy. The best test ideas still come from talking to real people, reading support tickets, and watching session recordings. AI is a brainstorming partner. Not a replacement for customer insight.

Our take: Use AI to generate your test backlog. But don’t skip customer research. The AI can tell you what’s broken. It can’t tell you why your visitors care.

2. AI-written copy and design

Large language models (the technology behind tools like ChatGPT) can write headline variants, button text, and email subject lines. Fast.

Optimizely reports 89.5% of their teams use AI-generated text. 52.6% use AI-generated images. Klaviyo uses AI for email subject line testing and send-time testing (picking the best time to email each subscriber automatically).

This is genuinely useful for multivariate testing, where you need dozens of headline and button combinations. Writing 20 variants by hand is tedious. Having AI generate them, then editing for brand voice, is a smarter workflow.

The catch: AI copy tends to sound like AI copy. If every version of your headline reads like a LinkedIn post, your test isn’t really comparing anything meaningful. Edit the output. Make it sound like you.

3. Smart traffic allocation

Traditional A/B testing splits your visitors 50/50 and waits. That’s clean. That’s reliable. But it also means half your visitors see the losing version for the entire test.

AI-powered traffic allocation (the technical name is multi-armed bandit testing) shifts visitors toward whichever version is winning. If Version B is pulling ahead after 1,000 visitors, the algorithm sends more people to B. Less wasted traffic. More conversions during the test itself.
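One common bandit algorithm is Thompson sampling. The sketch below is an illustration under simple assumptions (two versions, fixed true conversion rates, a uniform prior), not any vendor’s implementation. The function names and the example rates are made up.

```python
import random


def pick_version(stats, rng):
    """Thompson sampling: draw a plausible rate per version, show the highest."""
    best_name, best_draw = None, -1.0
    for name, (conversions, visitors) in stats.items():
        draw = rng.betavariate(1 + conversions, 1 + visitors - conversions)
        if draw > best_draw:
            best_name, best_draw = name, draw
    return best_name


def simulate_bandit(true_rates, visitors, seed=0):
    """Simulate a bandit test. Returns {version: [conversions, visitors]}."""
    rng = random.Random(seed)
    stats = {name: [0, 0] for name in true_rates}
    for _ in range(visitors):
        version = pick_version(stats, rng)
        stats[version][1] += 1
        if rng.random() < true_rates[version]:  # simulated visitor converts
            stats[version][0] += 1
    return stats


# Hypothetical true rates: A converts at 5%, B at 15%
print(simulate_bandit({"A": 0.05, "B": 0.15}, visitors=5000))
```

In a run like this, most visitors end up on B well before the test ends. That is the upside. The downside is that the losing version gets few visitors, so your estimate of its conversion rate stays noisy.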

A more advanced version, contextual bandits, goes further. It routes different visitor groups to different versions based on device type, location, or time of day.

The tradeoff is real, though. Bandits are great at making more money during the test. They’re worse at giving you a clean, confident answer about which version actually wins long-term. Yelp’s engineering team found two specific biases when they tested bandit systems. One skews early results. The other makes converged results look more certain than they actually are.

If you need to know the truth, run a fixed split. If you need to stop losing revenue while you learn, consider a bandit. Pick based on what matters more to you.

4. Predictive winner detection (AI early stopping)

This one gets the most hype. It also does the most damage when done wrong.

AI watches your test results in real time and predicts when a winner emerges, so you don’t have to wait for a full sample. Netflix uses confidence sequences (a math framework that stays accurate even when you peek at results mid-test). Spotify compared five different sequential testing frameworks and found big differences in how they perform.

Done right, with proper sequential statistics and methods like CUPED (a technique that reduces noise in your data), this can cut your test duration in half. Without sacrificing accuracy.
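In rough terms, CUPED uses something you knew about each visitor before the test (say, their spend in the prior month) to subtract out predictable variation. Here is a minimal sketch, assuming you have one pre-test value per visitor. The function name and the simulated data are ours, and production versions typically estimate theta on pooled data from both versions.

```python
import random
from statistics import mean, pvariance


def cuped_adjust(metric, pre_metric):
    """Return the CUPED-adjusted metric: y - theta * (x - mean(x))."""
    mean_x, mean_y = mean(pre_metric), mean(metric)
    n = len(metric)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(pre_metric, metric)) / n
    var_x = sum((x - mean_x) ** 2 for x in pre_metric) / n
    if var_x == 0:
        return list(metric)  # the pre-test data carries no information
    theta = cov_xy / var_x
    return [y - theta * (x - mean_x) for x, y in zip(pre_metric, metric)]


# Simulated visitors whose in-test spend tracks their pre-test spend
rng = random.Random(0)
pre_spend = [rng.gauss(50, 10) for _ in range(500)]
test_spend = [x + rng.gauss(0, 5) for x in pre_spend]
adjusted = cuped_adjust(test_spend, pre_spend)
print(pvariance(test_spend), pvariance(adjusted))
```

The average stays the same while the variance drops. Less noise means you need fewer visitors to detect the same lift. The gain depends entirely on how well the pre-test data predicts the in-test metric.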

The wrong version? It’s just peeking with a fancy label. And peeking is how you get bad results.

What AI gets wrong about testing

Every vendor pitches AI testing as a pure upgrade. Faster results. Better insights. More winners. They’re not mentioning the failure modes.

The peeking problem, now automated. When you check test results before enough visitors have come through, you’re more likely to see a “winner” that isn’t real. Evan Miller’s classic analysis showed that daily peeking on a 10-day test inflates your false positive rate to about 17%. That means roughly 1 in 6 “winners” isn’t actually better. With some Bayesian approaches, that number can hit 80%.

AI early stopping can make this worse, not better. If the algorithm stops your test the first time the numbers look good, you’re just peeking faster. The difference between false alarms and real misses matters when you’re betting real money on the result.
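You can see the peeking effect in a simulation. The sketch below runs A/A tests, where both versions are identical, so every “winner” is a false positive. It compares checking once at the end against checking every day and stopping at the first significant result. The traffic numbers, conversion rate, and function names are assumptions for illustration. The exact rates you get depend on those choices.

```python
import random
from math import sqrt
from statistics import NormalDist

Z_CRIT = NormalDist().inv_cdf(0.975)  # two-sided test at 5% significance


def is_significant(conv_a, conv_b, n):
    """Pooled two-proportion z-test with n visitors per version."""
    pooled = (conv_a + conv_b) / (2 * n)
    se = sqrt(pooled * (1 - pooled) * 2 / n)
    if se == 0:
        return False
    return abs(conv_b - conv_a) / n / se > Z_CRIT


def false_positive_rates(runs=1000, days=10, daily_visitors=200, rate=0.10, seed=1):
    """Return (rate with daily peeking, rate with one check at the end)."""
    rng = random.Random(seed)
    peeking_hits = fixed_hits = 0
    for _run in range(runs):
        conv_a = conv_b = n = 0
        flagged_on_any_day = False
        for _day in range(days):
            # Both versions share the same true rate: any "win" is noise
            conv_a += sum(rng.random() < rate for _ in range(daily_visitors))
            conv_b += sum(rng.random() < rate for _ in range(daily_visitors))
            n += daily_visitors
            if is_significant(conv_a, conv_b, n):
                flagged_on_any_day = True  # a peeker would have stopped here
        peeking_hits += flagged_on_any_day
        fixed_hits += is_significant(conv_a, conv_b, n)
    return peeking_hits / runs, fixed_hits / runs


if __name__ == "__main__":
    peeking, fixed = false_positive_rates()
    print(f"daily peeking: {peeking:.1%}, single check at the end: {fixed:.1%}")
```

The single check should land near the 5% you signed up for. Daily peeking lands several times higher, because every extra look is another chance for random noise to cross the line.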

AI models that look great on paper can fail in practice. Booking.com’s research team studied 150 machine learning models and found that 68% showed no improvement in A/B tests, despite performing well in offline evaluations. The model looked promising on historical data. Real visitors didn’t care.

Read that again. More than two-thirds of their AI models did nothing. AI can suggest things to test. But you still need a clean test to find out if they actually work. A/B testing isn’t something AI replaces. It’s something AI needs.

Novelty effects inflate early results. Visitors react to anything new. A dramatically different headline might get more clicks in week one, then settle back to baseline by week three. AI early stopping can lock in these novelty-inflated results before reality corrects them.

Organizational pressure is the real enemy. The biggest source of false positives isn’t the statistics. It’s your boss asking “do we have a winner yet?” on day two. AI makes it easier to peek, which makes it easier to cave to that pressure. Gartner’s 2025 Hype Cycle placed AI-augmented testing in the “Trough of Disillusionment” with only 5-20% market penetration. The tech is real. The maturity isn’t there yet.

Our take: The common A/B testing mistakes don’t go away because AI is involved. They just get automated. A good testing tool gives you honest numbers, not fast ones.

Which A/B testing tools actually have AI features

Every A/B testing tool now claims to have “AI.” The difference between tools is what their AI actually does versus what their marketing page says it does.

| Tool | AI hypothesis generation | AI copy/design | Smart traffic (bandits) | Predictive stopping | Pricing tier |
|---|---|---|---|---|---|
| VWO | Yes (Copilot scans pages) | Yes (copy suggestions) | Yes (bandits) | Yes (Bayesian) | Growth+ |
| Optimizely | Yes (Opal, deep) | Yes (text + images) | Yes (bandits) | Yes (Stats Engine) | Enterprise |
| AB Tasty | Yes (Hypothesis Copilot) | Limited | Yes (bandits) | Yes | Enterprise |
| Kameleoon | Yes (AI Copilot) | No | Yes (predictive targeting) | Yes | Enterprise |
| Statsig | Limited (AI summaries) | No | Yes (autotune) | Yes (sequential) | Free tier exists |
| Eppo | No | No | No | Yes (CUPED, sequential) | Enterprise |

Notice a pattern? The most useful AI features (idea generation, copywriting) mostly sit behind enterprise or upper-tier pricing. If you’re a small team, you probably can’t access them without a sales call.

Statsig is the exception: free tier, sequential testing included. OpenAI bought Statsig for $1.1 billion in September 2025. A billion dollars for a testing tool. That says something.

Eppo, since acquired by Datadog, focuses on statistical rigor over AI features. Both Statsig and Eppo are built for engineering teams, not solo marketers.

If you’re a small business owner or solo marketer, most AI split testing features are out of reach. The tools that are accessible (like Kirro) focus on getting the fundamentals right: easy setup, clean statistics, and results you can trust. Not as flashy as “AI-powered.” But it’s what actually helps you make better decisions.

When AI testing makes sense (and when it doesn’t)

AI testing isn’t for everyone. Quick gut check.

AI testing is a good fit if you have:

- 10,000+ monthly visitors (AI needs data to learn from)

- A team running 5 or more tests per month

- Large search spaces (testing many combinations at once)

- Email or SMS campaigns at scale

AI testing is a poor fit if you have:

- Fewer than 1,000 monthly visitors

- Never run an A/B test before

- One-off decisions (just pick the better version manually)

- A need to understand why something worked, not just which version won

An Evolv AI study published in AI Magazine found that AI-driven design evolution improved conversions by 43.5-46.6%. That sounds amazing. But that study ran on 599,008 visitor interactions over 60 days. Most small businesses don’t have that kind of traffic.

Microsoft runs 10,000+ tests per year across Bing, Office, and Teams. At that scale, AI features pay for themselves. At 2,000 visitors a month? You’re better off with a simple A/B test and good statistical power.

Start with traditional A/B testing. Learn the basics. Build the habit. Then add AI when you’ve got the traffic and velocity to support it.

How to start using AI in your next A/B test

You don’t need to buy an enterprise platform to benefit from AI in your testing. You probably already have everything you need.

First, get the basics right. Install a testing tool. Define your conversion goal (what you want visitors to do). Run one simple headline test. If you’ve never done this, you can set up your first test in about three minutes. Don’t skip the fundamentals.

Then use AI for brainstorming. Paste your page URL into ChatGPT, Claude, or any LLM. Ask: “What would you change on this page to increase sign-ups?” You’ll get 10 ideas in 30 seconds. Most will be obvious. One or two might surprise you.

Now let AI write your test versions. Ask it for five headline options for your landing page. Pick the two most different from your current headline. Test those. You just created a test without staring at a blank page.

The tool matters at this point. Use something with proper Bayesian or sequential stats, not a tool that just checks if the numbers “look significant.” A clean statistical engine is the difference between a real result and a coin flip that happened to look good. Kirro uses Bayesian statistics to give you honest answers, even with smaller traffic. AI suggests what to test. Your testing tool tells you if it actually worked.

And keep your brain turned on. AI can spot patterns in your data. It can’t understand your customer’s emotional triggers, their fears, or what makes them trust you. AI output is a starting point. Never the final answer.

Want to try it? Create a free test and pit an AI-generated headline against your current one. Low risk, real data. Our guide on how to design a marketing experiment covers how to run cleaner tests as you go.

FAQ

How does AI improve A/B testing?

AI touches four stages: generating test ideas, writing copy variants, allocating traffic, and detecting winners. The biggest impact is speed. Optimizely’s 2025 data shows teams run 78.7% more tests with AI assistance and finish them 53.7% faster. But a fast wrong answer is worse than a slow right one.

Can AI predict A/B test winners?

Yes, through methods that monitor results as they come in instead of waiting until the end. But prediction accuracy depends entirely on the math behind it. The best approaches (used by Optimizely, Uber, and Netflix) stay accurate even when you peek early. “Stop when the numbers look good” approaches without proper math inflate false positives from 5% to 17% or higher.

What A/B testing tools use AI?

VWO (Copilot), Optimizely (Opal), AB Tasty (EmotionsAI), Kameleoon (AI Copilot), and Statsig (now owned by OpenAI) all have AI features. Most require enterprise pricing. Statsig has a free tier. For teams that want solid testing without the complexity, Kirro focuses on clean stats and simple setup rather than AI bells and whistles.

Is AI replacing A/B testing?

No. If anything, A/B testing is more important because of AI. Booking.com’s research showed 68% of ML models that improved offline metrics showed no business impact when tested on real visitors. AI creates more things to test. You still need a rigorous test to know if they work.

What is the difference between AI testing and traditional A/B testing?

Traditional A/B testing uses a fixed traffic split (usually 50/50) and waits for a set number of visitors. AI testing can generate test ideas, write variants, shift traffic dynamically, and detect winners sooner. The core principle is the same: show real visitors two versions and measure which performs better. AI changes the workflow around the test, not the test itself.

What is automated A/B testing?

Automated A/B testing (sometimes called AI split testing) is A/B testing where software handles tasks that used to be manual. That might mean auto-generating headlines, auto-allocating traffic, or auto-stopping tests when results are clear. “Automated” is a spectrum. Some tools automate one step. Others try to automate the entire process. The best A/B testing software gives you automation where it helps and control where it matters.

How much traffic do you need for AI A/B testing?

More than traditional testing. Bandit algorithms, predictive stopping, and AI-driven personalization all need large datasets to work well. Evolv AI’s published study used 599,008 interactions. Most AI features need at minimum 10,000 monthly visitors to be useful. If your minimum detectable effect is small, you’ll need even more.

Randy Wattilete

CRO expert and founder with nearly a decade running conversion experiments for companies from early-stage startups to global brands. Built programs for Nestlé, felyx, and Storytel. Founder of Kirro (A/B testing).
