Meta A/B testing is a built-in tool inside Ads Manager. It splits your audience into separate groups and shows each group a different version of your ad. Then it tells you which one performed better. You can test creative, audiences, placements, and more across both Facebook and Instagram.

That’s the simple version. The complicated version? Meta’s algorithm quietly interferes with your tests. Most guides skip that part. We won’t.

Meta claims that winning A/B tests drove 30% lower cost per result on average. That number is real. But the way Meta measures “winning” has some serious holes that most advertisers never hear about.

This guide covers the three ways to test on Meta. Most articles only know one. We also get into the hidden costs nobody mentions and why a 2025 academic study found that Meta’s tests aren’t quite the real deal. We cover broader A/B testing fundamentals and platform-specific testing strategies in separate guides.

What is Meta A/B testing (and what it actually does)

If you’ve ever run two ads side by side and compared the numbers, you’ve probably thought you were A/B testing. You weren’t. Not really.

The difference is audience isolation. When you just run two ads in the same campaign, Meta’s algorithm decides who sees what. It might show your “better” ad to warmer audiences. Your test ad? It goes to people who were never going to click. The comparison is rigged from the start.

Meta’s A/B testing tool fixes this by creating non-overlapping groups. Each person only sees one version. Nobody sees both. That’s what makes it a real test instead of just running two ads and hoping for the best.

You can test five things: creative (images, video, copy), audience, placements, product sets, and post-click experience. Split testing anywhere follows the same idea. Show two versions. Measure. Keep the winner.

If you’re curious how other platforms handle this, our Amazon A/B testing guide covers the e-commerce side of things. And if you’re running search ads alongside social, our Google Ads A/B testing guide walks through how to test campaigns on Google’s platform.

The 3 ways to test ads on Meta (most guides only cover one)

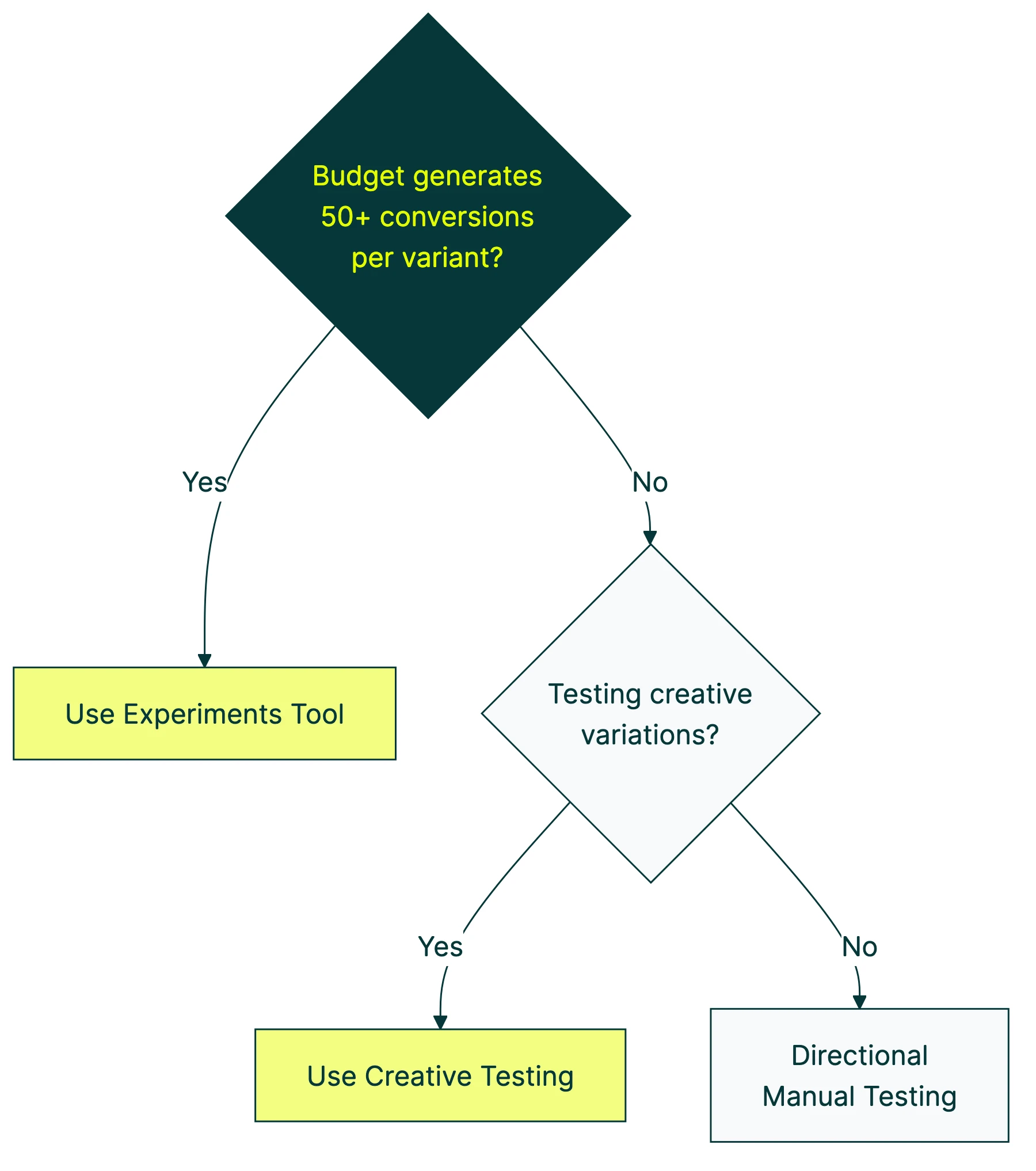

Most articles about Facebook A/B testing act like there’s one way to do it. There are three. Each has different rules and different strengths.

Method 1: the Experiments tool

This is Meta’s dedicated testing feature. You’ll find it under Analyze & Report in Ads Manager. It runs a structured test for 7 to 30 days. Best for clear, one-variable tests with a specific question in mind.

The catch: you need about 50 conversions per variation to get a reliable answer. If your budget can’t generate that volume, this method will just burn cash without telling you anything useful.

Method 2: creative testing (launched October 2025)

Meta’s newest option. It tests 2 to 5 ad variations within a single ad set and splits the budget equally between them. Identifies strong performers within 48 to 72 hours.

This is the best option for creative iteration. You’re not testing a big strategic question. You’re just figuring out which image or headline gets more clicks. Fast and practical.

Method 3: directional testing (manual)

Duplicate your ad set. Change one thing. Compare in reporting. Less scientific than the other methods, but it works when your account doesn’t hit the conversion minimums for a proper test.

| | Experiments tool | Creative testing | Directional (manual) |
|---|---|---|---|
| Best for | Strategic questions | Creative iteration | Small accounts |
| Variations | 2 | 2 to 5 | 2 |
| Time to results | 7 to 30 days | 48 to 72 hours | Varies |
| Min. conversions | 50 per variation | Lower threshold | None |
| Budget control | High | Medium (equal split) | Full manual |

Our take: Start with Method 2 if you’re testing creative. Use Method 1 when you have a specific question and the budget to answer it. Method 3 is for smaller accounts that can’t afford to wait for confidence. No shame in it.

Ben Heath has a detailed walkthrough of how to run Facebook ad tests correctly.

For a broader look at what’s available beyond Meta’s built-in tools, check our guide to the best A/B testing tools.

How to set up a Facebook A/B test (step by step)

Here’s the actual walkthrough for Facebook A/B testing using the Experiments tool.

Step 1: Pick what to test. Start with creative. It’s almost always the highest-impact variable. A different image can move your click-through rate more than any audience tweak.

Step 2: Write a test statement in plain language. Something like: “I think a video ad will get more purchases than a static image because people stop scrolling for movement.” This keeps you honest about what you’re actually testing.

Step 3: Open Experiments. In Ads Manager, go to Analyze & Report, then Experiments. Select “A/B Test.”

Step 4: Choose your setup. You can duplicate an existing campaign or compare two that are already running.

Step 5: Set your variable, budget, and duration. Only change one thing. If you change the image AND the headline, you won’t know which one made the difference. That’s a common A/B testing mistake and it wastes money every time.

Step 6: Launch and walk away. Resist checking for at least 4 to 7 days. Seriously.

The budget formula: take what you currently pay per customer action (your CPA), multiply by 50, then multiply by the number of versions. If your CPA is $10 and you have 2 versions, budget at least $1,000 ($10 × 50 × 2). The 50 is the conversions-per-variation minimum that makes a test’s results reliable.
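If you’d rather plug in your own numbers, here’s that formula as a tiny Python sketch. It encodes nothing more than this guide’s rule of thumb (CPA × 50 × versions), not a formula Meta publishes, and the function name is made up for illustration.

```python
# Rule of thumb from this guide: CPA x 50 conversions x number of versions.
# The 50-conversion floor is a heuristic, not a formula Meta publishes.
MIN_CONVERSIONS_PER_VARIATION = 50


def minimum_test_budget(cpa: float, versions: int,
                        conversions_per_variation: int = MIN_CONVERSIONS_PER_VARIATION) -> float:
    """Smallest spend that could plausibly buy enough conversions for every version."""
    if cpa <= 0:
        raise ValueError("CPA must be positive")
    if versions < 2:
        raise ValueError("A test needs at least two versions")
    return cpa * conversions_per_variation * versions


print(minimum_test_budget(10, 2))     # the $10 CPA example: 1000
print(minimum_test_budget(27.66, 2))  # WordStream's 2025 cost-per-lead benchmark
```

Adding a third version bumps the $10 CPA example to $1,500. Every extra variation costs another 50 conversions.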

What to test first (and the order that matters)

Not all tests are created equal. There’s a hierarchy. Go in this order for the biggest impact per dollar spent:

- Creative format (image vs. video vs. carousel)

- Offer or value proposition (what you’re actually selling or promising)

- Ad copy angle (emotional vs. logical, problem vs. solution)

- Headline and call to action

- Audience

- Placements

Creative comes first because it’s what stops the scroll. You can have a perfect audience, but if the ad is boring, nobody clicks. Jon Loomer tested this and found that Flexible Format with images outperformed carousel ads with two cards. The format matters more than most people think.

One variable at a time. Always. If you change the image and the headline together and the ad does better, great. But you have no idea which change caused it. That’s not a test. That’s a coin flip. Testing multiple things at once requires multivariate testing. That needs way more traffic than most Meta advertisers have.
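Why does multivariate testing need so much more traffic? Every combination becomes its own test cell, and each cell needs its own conversions. Here’s a quick Python sketch with hypothetical ad elements, reusing the same 50-conversions-per-cell rule of thumb.

```python
from itertools import product

# Hypothetical multivariate test: every combination of elements becomes its own cell.
images = ["lifestyle photo", "product shot", "UGC video"]
headlines = ["problem-focused", "solution-focused", "social proof"]
ctas = ["Shop Now", "Learn More"]

cells = list(product(images, headlines, ctas))
conversions_per_cell = 50  # same rule of thumb as a simple A/B test

print(f"{len(cells)} cells need about {len(cells) * conversions_per_cell} conversions")
# prints "18 cells need about 900 conversions"
```

Compare that with a one-variable A/B test on the image alone: 2 versions, about 100 conversions.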

And don’t test things that won’t produce enough data to measure. If you only get 20 clicks a day, testing CTA button text is pointless. You’ll wait three weeks and learn nothing.

The ad gets people to click. But the landing page gets them to convert. That’s where tools like Kirro pick up the story, testing what happens after the ad. If your conversion rate stays flat despite winning ads, the landing page is probably the bottleneck.

How long to run your test (and what “confidence” actually means)

Meta says 7 to 30 days. In practice, 7 days minimum (to account for how people behave differently on weekdays vs. weekends), 14 days ideal, 30 days maximum before fatigue sets in.
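You can combine the 7-to-30-day window with the 50-conversion minimum to estimate how long your own test needs. A rough Python sketch, assuming your daily conversion volume per variation stays steady (it rarely does, so treat the answer as a floor). The function name is hypothetical.

```python
import math
from typing import Optional


def planned_test_days(daily_conversions_per_variation: float,
                      target_conversions: int = 50,
                      min_days: int = 7,
                      max_days: int = 30) -> Optional[int]:
    """Days needed to reach the conversion target, respecting the 7-to-30-day window.

    Returns None when the target can't be reached within max_days.
    """
    if daily_conversions_per_variation <= 0:
        return None
    days = math.ceil(target_conversions / daily_conversions_per_variation)
    days = max(days, min_days)  # always cover at least one full week
    return days if days <= max_days else None


for rate in (10, 3, 1):
    print(rate, planned_test_days(rate))
# 10 -> 7 (target hit in 5 days, but we still run a full week)
# 3 -> 17
# 1 -> None (50 days needed, which is past the 30-day limit)
```

A `None` here is your cue to use directional testing instead.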

Most guides gloss over this part. Meta uses two confidence thresholds: 65% for early winner detection and 90% for declaring a final winner. Neither reaches the 95% level conventionally used in scientific research.

What does 90% confidence mean in plain English? If you ran this exact test 10 times, the same version would win 9 times. One time out of 10, you’d pick the wrong answer. That’s decent for ad decisions. But it’s not gospel.

This is similar to how Bayesian testing works. Instead of waiting for a hard “yes” or “no,” you’re working with probabilities. Meta’s approach gives you a directional answer, not a courtroom verdict.
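To make “working with probabilities” concrete, here’s a generic Bayesian sketch in Python. Meta doesn’t publish its exact method, so this is not how Ads Manager computes confidence. It’s the textbook beta-binomial approach: model each version’s conversion rate as a Beta distribution and estimate how often B’s rate beats A’s. The function name and the numbers are hypothetical.

```python
import random


def prob_b_beats_a(conv_a: int, n_a: int, conv_b: int, n_b: int,
                   draws: int = 20_000, seed: int = 42) -> float:
    """Monte Carlo estimate of P(rate_B > rate_A) under uniform Beta(1, 1) priors."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Posterior for each conversion rate: Beta(1 + conversions, 1 + non-conversions)
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


# Hypothetical results: 50 vs. 65 purchases from 2,000 people each.
print(f"{prob_b_beats_a(50, 2000, 65, 2000):.0%}")
```

That lands a little above 90%. B is probably better, with a real chance it isn’t. That’s a directional answer, not a verdict.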

And please, don’t stop your test early because one version looks like it’s winning on day 2. Early results are noisy. Peeking at data mid-test and making decisions is one of the most common A/B testing mistakes in the book. False positives spike when you peek.
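You can see the peeking problem in a small simulation. The sketch below runs hypothetical A/A tests, where both versions have the same true conversion rate, so any “winner” is a false positive. It compares checking a standard two-proportion z-test once at day 14 against checking it every day and calling a winner the first time it crosses the line. Traffic and rates are made-up assumptions, and this isn’t Meta’s statistic.

```python
import math
import random
from typing import Tuple


def significant(conv_a: int, conv_b: int, n: int, z_crit: float = 1.96) -> bool:
    """Two-proportion z-test with equal group sizes (about 95% two-sided)."""
    pooled = (conv_a + conv_b) / (2 * n)
    se = math.sqrt(pooled * (1 - pooled) * (2 / n))
    if se == 0:
        return False
    return abs(conv_b / n - conv_a / n) / se > z_crit


def simulate_aa_tests(tests: int = 500, days: int = 14, daily_visitors: int = 100,
                      rate: float = 0.05, seed: int = 7) -> Tuple[float, float]:
    """A/A tests: both versions share one true rate, so every 'winner' is a false positive."""
    rng = random.Random(seed)
    fixed_hits = peek_hits = 0
    for _ in range(tests):
        conv_a = conv_b = n = 0
        flagged_at_any_peek = False
        for _ in range(days):
            n += daily_visitors  # visitors per version so far
            conv_a += sum(rng.random() < rate for _ in range(daily_visitors))
            conv_b += sum(rng.random() < rate for _ in range(daily_visitors))
            if significant(conv_a, conv_b, n):  # checking the dashboard every day
                flagged_at_any_peek = True
        peek_hits += flagged_at_any_peek
        fixed_hits += significant(conv_a, conv_b, n)  # one look, at the planned end
    return fixed_hits / tests, peek_hits / tests


fixed, peeking = simulate_aa_tests()
print(f"false positives, one look at day 14: {fixed:.0%}")
print(f"false positives, daily peeking:      {peeking:.0%}")
```

The single look stays near the expected 5%. Daily peeking gives the same identical ads many more chances to “win” by luck.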

The dirty secret about Meta A/B tests (why they’re not always reliable)

This is the part nobody talks about. And it’s kind of a big deal.

Researchers at SMU and the University of Michigan published a 2025 study in the Journal of Marketing. They found something called “divergent delivery.” In plain language: Meta’s algorithm doesn’t show your two ad versions to truly random groups. It targets different types of people for each version.

Think of it like a basketball game where one team gets to play against easier opponents. They “win,” but did they actually play better? Or did they just have a softer schedule?
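Here’s the same idea in numbers. This Python sketch uses hypothetical conversion rates, not data from the study: two ads that perform identically within each audience segment, but delivery skews one toward warm prospects and the other toward cold ones.

```python
# Hypothetical conversion rates: both ads perform identically within each segment.
SEGMENT_RATES = {"warm": 0.05, "cold": 0.01}


def blended_rate(audience_mix: dict) -> float:
    """Overall conversion rate given each segment's share of an ad's impressions."""
    return sum(share * SEGMENT_RATES[segment] for segment, share in audience_mix.items())


# Suppose delivery skews warm for ad A and cold for ad B.
ad_a_mix = {"warm": 0.7, "cold": 0.3}
ad_b_mix = {"warm": 0.3, "cold": 0.7}

print(f"Ad A: {blended_rate(ad_a_mix):.1%}")  # Ad A: 3.8%
print(f"Ad B: {blended_rate(ad_b_mix):.1%}")  # Ad B: 2.2%
```

Ad A looks about 70% better, even though neither ad is actually better. That’s the softer schedule at work.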

Ron Kohavi ran A/B testing at Microsoft and Airbnb, which makes him one of the most credentialed people in this space. He called Meta’s tests “observational studies with confounds, not real experiments.” A polite way of saying they’re not as trustworthy as Meta implies.

A UBC Sauder study added another wrinkle. The average Facebook user is unknowingly part of about 10 experiments at any given time. Your test isn’t running in a vacuum.

Bottom line: Meta’s A/B tests are directionally useful, not scientifically definitive. They tell you “this probably works better.” For most ad decisions, that’s good enough. But treat results as strong hints, not proof.

You can make them more reliable by using larger budgets, running longer tests, and testing in ABO (Ad Set Budget Optimization) campaigns instead of Advantage+ campaigns, where Meta has even more control over delivery.

Our take: Meta’s tests are still worth running. They’re the best tool available inside the platform. Just don’t treat a 90% confidence result like a guarantee. It’s more like a really well-informed guess.

Advantage+ and why it breaks standard testing

Most Meta advertisers now use Advantage+ campaigns. Think of it as Meta’s autopilot mode. You give it a goal, a budget, and some creative. Meta handles the rest: audience targeting, placements, budget allocation.

The problem? You can’t properly test on autopilot. Audience targeting, placements, and budget distribution are the exact variables you’d want to hold constant during a test. When Meta shifts them behind the scenes, your results get muddied.

Running a split test inside an Advantage+ campaign is like trying to test which recipe is better while someone keeps changing the oven temperature. You’ll get a result. You just can’t trust it.

The fix: use ABO (Ad Set Budget Optimization) campaigns for your controlled tests. ABO lets you set the budget at the ad set level, so each variation gets the spend you assign instead of Meta shifting money toward the one it favors. Once you have a winner, apply it to your Advantage+ campaigns.

Meta’s new Creative Testing feature (October 2025) was built for this AI-heavy world. It tests 2 to 5 variations with equal budget splits, sidestepping some of the Advantage+ interference. If you’re deep in the Advantage+ ecosystem, this is your best testing option right now.

The hidden costs of testing (CPM spikes and learning phase resets)

Every guide tells you to test your ads. Almost none of them mention what it actually costs.

When you launch a test, each variation enters Meta’s “learning phase” separately. That’s the period where the algorithm figures out who to show your ad to. During this phase, your CPM (what you pay per 1,000 ad impressions) can spike to 2 to 3 times its normal level. Your return on ad spend typically plunges before recovering.

Testing isn’t free. Even if the test itself doesn’t cost extra, the temporary performance dip is a real cost. Budget for 3 to 5 days of higher costs per variation and don’t panic-stop tests during the learning phase. That just resets the clock.

When NOT to test: If your monthly ad budget is under $500, the testing cost may eat your entire margin. At that budget level, you’re better off following creative best practices and skipping formal tests entirely. Focus your testing energy on the landing page instead, where you can test without paying Meta a premium.

2025 benchmarks from WordStream put the average cost per click at $0.70 and cost per lead at $27.66 (up 21% from last year). Do the math on that. At $27.66 per lead, a two-way test with 50 conversions per variation needs 100 leads, which means spending $2,766 minimum. That’s the real price of reliable data. For a full breakdown of Facebook Ads conversion rate benchmarks by industry and campaign type, we have a dedicated guide.

What to do after your test (scaling winners without losing momentum)

You found a winner. Now what?

First, use the Post ID trick. When you move a winning ad to a new campaign, don’t recreate it from scratch. Find its Post ID in Ads Manager and use that to preserve all the social proof: likes, comments, shares. Starting fresh means starting at zero engagement, which hurts delivery.

Then watch the scaling trap. What works at $50 per day doesn’t always work at $500 per day. Meta’s algorithm behaves differently at different budget levels. Scale by 20 to 30% every few days instead of jumping straight to 10x.
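To see what “20 to 30% every few days” looks like in practice, here’s a Python sketch that lays out a scaling schedule. It assumes “every few days” means every 3 days and that the budget is capped at your target. Both are assumptions, and the function name is hypothetical.

```python
def scaling_schedule(start_budget: float, target_budget: float,
                     step: float = 0.20, days_between_steps: int = 3) -> list:
    """(day, daily budget) pairs, raising spend by `step` until the target is reached."""
    if step <= 0:
        raise ValueError("step must be a positive fraction, e.g. 0.20 for 20%")
    day, budget = 0, start_budget
    schedule = [(day, budget)]
    while budget < target_budget:
        day += days_between_steps
        budget = min(round(budget * (1 + step), 2), target_budget)  # never overshoot the target
        schedule.append((day, budget))
    return schedule


plan = scaling_schedule(50, 500)
print(len(plan) - 1, "increases, reaching", plan[-1][1], "on day", plan[-1][0])
# prints "13 increases, reaching 500 on day 39"
```

At 30% steps (`step=0.30`), the same jump takes 9 increases, or 27 days. Slower than flipping to 10x overnight, but the algorithm gets time to adjust at each level.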

And keep a test log. Write down what you tested, what won, by how much, and what you learned. A simple spreadsheet works. After 10 tests, patterns show up. “Video always beats static for cold audiences.” “Problem-focused headlines beat solution-focused ones.” That’s how random testing becomes a system.

A naming convention helps too. Something like Campaign_Variable_DateRange keeps your Ads Manager from turning into a graveyard of “Copy of Copy of Test Ad v3 FINAL.”
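If you’d like your test log and naming convention to stay consistent, a few lines of Python can generate both. This is a hypothetical sketch: the record fields mirror what this guide suggests logging (what you tested, what won, by how much, what you learned), the date format in the name is our assumption, and the example entry is made up.

```python
import csv
import io
from dataclasses import dataclass
from datetime import date


@dataclass
class ExperimentRecord:
    campaign: str
    variable: str
    start: date
    end: date
    winner: str
    lift_pct: float
    learning: str

    @property
    def name(self) -> str:
        """Campaign_Variable_DateRange, e.g. SpringSale_Format_20250301-20250314."""
        return f"{self.campaign}_{self.variable}_{self.start:%Y%m%d}-{self.end:%Y%m%d}"


def write_log(records, stream) -> None:
    """Write a header plus one spreadsheet-friendly CSV row per finished test."""
    writer = csv.DictWriter(stream, fieldnames=["name", "winner", "lift_pct", "learning"])
    writer.writeheader()
    for r in records:
        writer.writerow({"name": r.name, "winner": r.winner,
                         "lift_pct": r.lift_pct, "learning": r.learning})


# Made-up example entry.
record = ExperimentRecord("SpringSale", "Format", date(2025, 3, 1), date(2025, 3, 14),
                          winner="video", lift_pct=18.0,
                          learning="Video beat static for cold audiences")
buffer = io.StringIO()
write_log([record], buffer)
print(buffer.getvalue())
```

Paste the output into the same spreadsheet after every test, and the patterns start to show.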

Meta tests the ad, but what about what happens after the click?

Meta’s A/B testing only covers what happens inside the Meta platform. The ad creative, the audience, the placements. Once someone clicks your ad and lands on your website? Meta can’t test that.

And that’s often where the biggest gains are hiding.

A Heights brand case study showed 25 to 29.7% conversion lifts from pre-validating ad copy angles on landing pages. The ad got people to click. The landing page got them to buy. Testing only the ad is like rehearsing only the first half of a presentation.

This is where landing page split testing comes in. You test headlines, calls to action, trust signals, page layout. The stuff that turns a click into a customer.

Kirro picks up where Meta stops. Meta tests the ad. Kirro tests what happens next. You can set up a landing page test in about three minutes and see which version of your page turns more paid clicks into actual results.

If you’re spending money driving traffic with Meta ads but not testing the pages those ads point to, you’re leaving money on the table. Our CRO testing guide can help you think beyond just the ad.

How iOS privacy changes affect your Meta tests

In 2021, Apple released iOS 14.5 and gave iPhone users a simple choice: let Facebook track what you do on other apps and websites, or block it. 88% of people said “block.”

That single change broke a lot of what made Meta’s ad tracking reliable.

Before iOS 14.5, Meta could follow someone from ad click to purchase for up to 28 days. Now? Just 7-day click and 1-day view. Meta also limits you to 8 trackable events per website domain. A huge chunk of conversions simply go unrecorded.
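Here’s a simplified Python model of what a 7-day click / 1-day view window means for a single conversion. It’s an illustration, not Meta’s attribution logic. Real reporting also involves modeling, deduplication, and delays, and conversions from people who opted out of tracking may never reach Meta at all, whatever the window. The helper names are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Optional

# Post-iOS 14.5 default windows described in this guide (simplified model).
CLICK_WINDOW = timedelta(days=7)
VIEW_WINDOW = timedelta(days=1)


def within(window: timedelta, event_at: Optional[datetime], conversion_at: datetime) -> bool:
    """True if the conversion happened after the ad event and inside the window."""
    return event_at is not None and timedelta(0) <= conversion_at - event_at <= window


def is_attributed(conversion_at: datetime,
                  clicked_at: Optional[datetime] = None,
                  viewed_at: Optional[datetime] = None) -> bool:
    return (within(CLICK_WINDOW, clicked_at, conversion_at)
            or within(VIEW_WINDOW, viewed_at, conversion_at))


click = datetime(2025, 3, 1, 12, 0)
print(is_attributed(datetime(2025, 3, 5), clicked_at=click))        # True: 3.5 days after the click
print(is_attributed(datetime(2025, 3, 12), clicked_at=click))       # False: a 28-day window would have counted it
print(is_attributed(datetime(2025, 3, 3, 12, 0), viewed_at=click))  # False: 2 days after a view
```

The second purchase still happened. It just falls outside the window, so it never shows up in your test results.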

For your A/B tests, this means more noise in your data. Some conversions happen but Meta never sees them. Your winning ad might actually be winning by more than the numbers show. Or the gap might be smaller than it looks. You just can’t be sure.

Meta’s response? Incremental Attribution, launched April 2025. Early results showed 20%+ improvement in tracking across 45 advertisers. It’s getting better, but it’s not back to pre-iOS levels.

What you can do: install the Conversions API, which tracks conversions from your server instead of relying on browser cookies. Run tests longer to collect more data. And accept that your results will have some noise baked in. Measured.com notes that Meta’s tracking is blind to non-Meta touchpoints entirely.
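For a sense of what the Conversions API actually receives, here’s a minimal Python sketch that builds one server-side purchase event. The field names (`event_name`, `event_time`, `event_id`, `action_source`, `user_data.em`, `custom_data`) follow Meta’s Conversions API documentation as we understand it, so check the current docs before relying on them. The helper functions are hypothetical, and the sketch only builds the JSON. Actually sending it means a POST to the Graph API events endpoint for your pixel with an access token, which is left out here.

```python
import hashlib
import json
import time
import uuid


def hash_email(email: str) -> str:
    """Normalize (trim, lowercase), then SHA-256 hash, as Meta asks for customer identifiers."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def purchase_event(email: str, value: float, currency: str = "USD",
                   event_id: str = "", event_time: float = 0) -> dict:
    return {
        "event_name": "Purchase",
        "event_time": int(event_time or time.time()),  # Unix timestamp in seconds
        # Reuse the browser pixel's event ID so Meta can deduplicate the two signals.
        "event_id": event_id or str(uuid.uuid4()),
        "action_source": "website",
        "user_data": {"em": [hash_email(email)]},
        "custom_data": {"currency": currency, "value": value},
    }


payload = {"data": [purchase_event("Jane@Example.com ", 49.0, event_id="order-1001")]}
print(json.dumps(payload, indent=2))
```

Because the event comes from your server, it doesn’t depend on the visitor’s browser allowing a tracking cookie.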

If this iOS tracking gap frustrates you, consider testing the landing page experience separately with a tool that doesn’t depend on Meta’s tracking. Set up a split test on your own site and measure results directly.

FAQ

What is Meta A/B testing?

Meta A/B testing is a built-in tool in Meta Ads Manager. It lets you compare two versions of a Facebook or Instagram ad to see which one performs better. It splits your audience into non-overlapping groups so each person only sees one version, although Meta’s delivery algorithm can still skew who ends up in each group. Then it measures which one gets more of the result you care about: clicks, purchases, or signups. You can test creative, audiences, placements, product sets, and post-click experience.

How long should I run a Meta A/B test?

At least 7 days. This accounts for how people behave differently on weekdays versus weekends. Aim for 14 days if your budget allows. You also need at least 50 conversions per variation for meaningful results. If your budget can’t generate that volume in two weeks, the test may not reach a conclusion you can trust.

How much does it cost to A/B test on Meta?

The test itself doesn’t cost extra on top of your normal ad spend. But each variation enters the learning phase separately, which can push costs to 2 to 3 times their normal level. Budget for at least CPA times 50 times the number of variations. So if you pay $10 per conversion and have 2 versions, plan for at least $1,000. Factor in the learning phase spike and the real number is closer to $1,500.

Can I A/B test on Instagram too?

Yes. Meta Ads Manager covers both Facebook and Instagram. You can run tests across both platforms or test placements (Facebook vs. Instagram) as your variable. Instagram is on track to make up over 50% of Meta’s US ad revenue in 2025. Testing there matters more than ever.

How do I turn off an A/B test on Facebook?

Go to Ads Manager, then Experiments. Find your running test, click the three-dot menu, and select End Test. You can end it early, but Meta recommends the full duration. Stopping early increases the chance of a false positive. That’s when the data says one version wins, but it actually doesn’t.

Randy Wattilete

CRO expert and founder with nearly a decade running conversion experiments for companies from early-stage startups to global brands. Built programs for Nestlé, felyx, and Storytel. Founder of Kirro (A/B testing).
